- Do final tweaks to the feature

- Submit deliverables Mon, Nov 9th 2359

- Wrap up the milestone Wed, Nov 11th 2359

- Submit the demo video Wed, Nov 11th 2359

- Prepare for the practical exam

- Attend the practical exam during lecture on Fri, Nov 13th 2359

1 Do final tweaks to the feature

- Do the final tweaks to the feature and the documentation. We strongly recommend against making major changes to the product this close to the submission deadline.

2 Submit deliverables Mon, Nov 9th 2359

- Deadline for all v1.4 submissions is Mon, Nov 9th 2359 unless stated otherwise.

- Penalty for late submission:

-1 mark for missing the deadline (up to 2 hours of delay).

-2 marks for an extended delay (up to 24 hours late).

Penalty for delays beyond 24 hours is determined on a case-by-case basis.

- Even a one-second delay is considered late, irrespective of the reason.

- For submissions done via LumiNUS, the submission time is the timestamp shown by LumiNUS.

- When determining the late submission penalty, we take the latest submission even if the same exact file was submitted earlier. Do not submit the same file multiple times if you want to avoid unnecessary late submission penalties.

- The whole team is penalized for problems in team submissions. Only the respective student is penalized for problems in individual submissions.

- Submit to the LumiNUS folder we have set up, not to your project space. CS2103T students: documents should be submitted to both modules. It's not enough to submit to the CS2101 side only.

- Follow submission instructions closely. Any non-compliance will be penalized. e.g. wrong file name/format.

- For PDF submissions, ensure the file is usable and hyperlinks in the file are correct. Problems in documents are considered bugs too e.g., broken links, outdated diagrams/instructions etc.

- Do not update the code during the 14 days after the deadline. Get our permission first if you need to update the code in the repo during that freeze period.

- You can update issues/milestones/PRs even during the freeze period.

- [CS2103T only] You can update the source code of the docs (but not functional/test code) if your CS2101 submission deadline is later than our submission deadline. However, a freeze period of 1-2 days is still recommended, so that there is a clear gap between the tP submission and subsequent docs updates.

- You can update the code during the freeze period if the change is related to a late submission approved by us.

- You can continue to evolve your repo after the freeze period.
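The late-submission tiers listed in this section can be sketched as a small function. This is only an illustration of the stated rules, not the teaching team's grading script: the name `late_penalty` and the choice to return `None` for the case-by-case tier are assumptions.

```python
from datetime import datetime, timedelta
from typing import Iterable, Optional


def late_penalty(deadline: datetime, submissions: Iterable[datetime]) -> Optional[int]:
    """Return the late penalty in marks, or None if it is decided case by case.

    Hypothetical helper: only the tiers themselves come from the rules above.
    """
    # The latest submission is the one that counts, even if the same file
    # was also submitted earlier.
    delay = max(submissions) - deadline
    if delay <= timedelta(0):
        return 0      # on time
    if delay <= timedelta(hours=2):
        return -1     # even a one-second delay lands here
    if delay <= timedelta(hours=24):
        return -2     # extended delay
    return None       # beyond 24 hours: determined case by case
```

Note how a re-submission made one second after the deadline is penalized even when an identical file was submitted an hour before it, which is why re-submitting the same file is discouraged.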

Submissions:

To convert the UG/DG/PPP into PDF format, go to the generated page in your project's github.io site and use this technique to save as a pdf file. Using other techniques can result in poor quality resolution (will be considered a bug) and unnecessarily large files.

Ensure hyperlinks in the pdf files work. Your UG/DG/PPP will be evaluated using PDF files during the PE. Broken/non-working hyperlinks in the PDF files will be considered as bugs and will count against your project score. Again, use the conversion technique given above to ensure links in the PDF files work.

The icon indicates team submissions. Only one person needs to submit on behalf of the team, but we recommend that others help verify that the submission is in order i.e., the responsibility for (and any penalty for problems in) team submissions is best shared by the whole team rather than burdening one person with it.

The icon indicates individual submissions.

- Product:

- Do a release on GitHub, tagged as v1.4.

- Upload the jar file to LumiNUS.

File name: [team ID][product name].jar e.g., [CS2103-T09-2][Contacts Plus].jar

Admin tP → Deliverables → Executable

- Should be an executable jar file.

- Should be releasable (i.e., it can be used by end-users). While some features may be scheduled for later versions, the features in v1.4 should be good enough to make it usable by at least some of the target users.

- Also note the following constraint:

Admin tP Constraints → Constraint-File-Size

Constraint-File-Size

The file sizes of the deliverables should not exceed the limits given below.

Reason: It is hard to download big files during the practical exam due to limited WiFi bandwidth at the venue:

- JAR file: 100MB (Some third-party software -- e.g., Stanford NLP library, certain graphics libraries -- can cause you to exceed this limit)

- PDF files: 15MB/file (Not following the recommended method of converting to PDF format can cause big PDF files. Another cause is using unnecessarily high resolution images for screenshots).
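A quick pre-submission check against these limits could look like the sketch below. The helper names are hypothetical, and treating 1 MB as 1024 * 1024 bytes is an assumption (the constraint does not say which definition applies), so leave some headroom rather than aiming for the exact limit.

```python
import os

MB = 1024 * 1024  # assumption: 1 MB = 1024 * 1024 bytes
SIZE_LIMITS = {".jar": 100 * MB, ".pdf": 15 * MB}


def within_limit(file_name: str, size_in_bytes: int) -> bool:
    """Check a deliverable's size against the limit for its extension."""
    extension = os.path.splitext(file_name)[1].lower()
    limit = SIZE_LIMITS.get(extension)
    # File types without a stated limit are not checked here.
    return limit is None or size_in_bytes <= limit


def oversized(paths):
    """Return the paths (existing files on disk) that exceed their limit."""
    return [p for p in paths if not within_limit(p, os.path.getsize(p))]
```

Calling `oversized` on the jar and PDF files you are about to upload returns an empty list when every file is within its limit.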

- Source Code: Push the code to GitHub and tag with the version number. Please ensure the code reported by RepoSense as yours is correct; any updates to RepoSense config files or @@author annotations after the deadline will be considered a later submission. Note that the quality of the code attributed to you accounts for a significant component of your final score, graded individually.

Admin tP → Deliverables → Source Code

- Should match v1.4 deliverables i.e., executable, docs, website, etc.

- To be delivered as a Git repo. Ensure your GitHub team repo is updated to match the executable.

- User Guide: Convert to pdf and upload to LumiNUS.

File name: [TEAM_ID][product Name]UG.pdf e.g., [CS2103-T09-2][Contacts Plus]UG.pdf

Admin tP → Deliverables → User Guide

In UG/DG, using hierarchical section numbering and figure numbering is optional (reason: it's not easy to do in Markdown), but make sure it does not inconvenience the reader (e.g., use section/figure title and/or hyperlinks to point to the section/figure being referred to). Examples:

In the section Implementation given above ...

CS2103 does not require you to indicate the author name of DG/UG sections (CS2101 requirements may differ). We recommend (but do not require) that you ensure the code dashboard reflects the authorship of doc files accurately.

- The main content you add should be in the docs/UserGuide.md file (for ease of tracking by grading scripts).

- Should cover all v1.4 features. Ensure those descriptions match the product precisely, as they will be used by peer testers (inaccuracies will be considered bugs).

- Optionally, can also cover future features. Mark those as Coming soon.

- Also note the Constraint-File-Size constraint given above under Executable: PDF files should not exceed 15MB/file.

- Developer Guide: submission is similar to the UG

File name: [TEAM_ID][product Name]DG.pdf e.g., [CS2103-T09-2][Contacts Plus]DG.pdf

Admin tP → Deliverables → Developer Guide

- The main content you add should be in the docs/DeveloperGuide.md file (for ease of tracking by grading scripts). If you use PlantUML diagrams, commit the diagrams as .puml files in the docs/diagrams folder.

- Should match the v1.4 implementation.

- OPTIONAL You can include proposed implementations of future features.

- Include an appendix named Instructions for Manual Testing, to give some guidance to the tester to chart a path through the features, and provide some important test inputs the tester can copy-paste into the app.

- Cover all user-testable features but no need to cover existing AB3 features if you did not touch them.

- No need to give a long list of test cases including all possible variations. It is up to the tester to come up with those variations.

- Information in this appendix should complement the UG. Minimize repeating information that is already mentioned in the UG.

- Inaccurate instructions will be considered bugs.

- We highly recommend adding an appendix named Effort that evaluators can use to estimate the total project effort.

- Keep it brief (~1 page)

- Explain the difficulty level, challenges faced, effort required, and achievements of the project.

- Use AB3 as a reference point e.g., you can explain that while AB3 deals with only one entity type, your project was harder because it deals with multiple entity types.

DG Tips

- Aim to showcase your documentation skills. The stated objective of the DG is to explain the implementation to a future developer, but a secondary objective is to serve as evidence of your ability to document deeply-technical content using prose, examples, diagrams, code snippets, etc. appropriately. To that end, you may also describe features that you plan to implement in the future, even beyond v1.4 (hypothetically).

For an example, see the description of the undo/redo feature implementation in the AddressBook-Level3 developer guide.

- Diagramming tools:

- AB3 uses PlantUML (see the guide Using PlantUML @SE-EDU/guides for more info).

- You may use any other tool too (e.g., PowerPoint). But if you do, note the following:

Choose a diagramming tool that has some 'source' format that can be version-controlled using git and updated incrementally (reason: diagrams need to evolve with the code that is already being version controlled using git). For example, if you use PowerPoint to draw diagrams, also commit the source PowerPoint files so that they can be reused when updating diagrams later.

- Can IDE-generated UML diagrams (i.e., automatically reverse engineered from the Java code) be used in project submissions? Not a good idea. Given below are three reasons, each of which can be reported by evaluators as 'bugs' in your diagrams, costing you marks:

- They often don't follow the standard UML notation (e.g., they add extra icons).

- They tend to include every little detail whereas we want to limit UML diagrams to important details only, to improve readability.

- Diagrams reverse-engineered by an IDE might not represent the actual design as some design concepts cannot be deterministically identified from the code e.g., differentiating between multiplicities 0..1 vs 1, composition vs aggregation.

- Use multiple UML diagram types. Following from the point above, try to include UML diagrams of multiple types to showcase your ability to use different UML diagrams.

- Keep diagrams simple. The aim is to make diagrams comprehensible, not necessarily comprehensive.

Ways to simplify diagrams:- Omit less important details. Examples:

- a class diagram can omit minor utility classes, private/unimportant members; some less-important associations can be shown as attributes instead.

- a sequence diagram can omit less important interactions, self-calls.

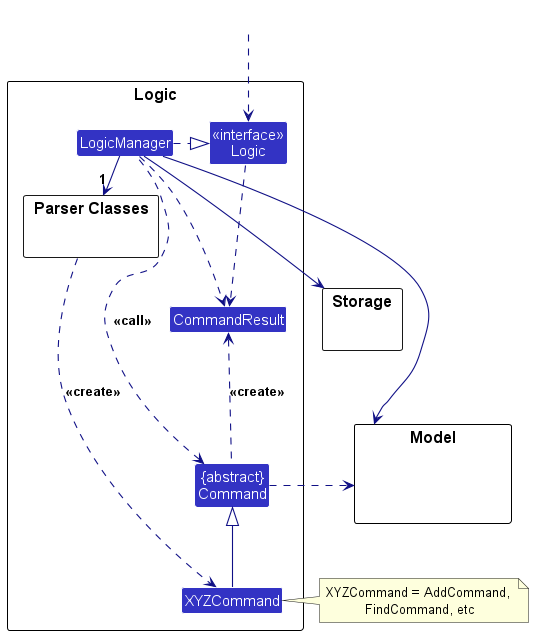

- Omit repetitive details e.g., a class diagram can show only a few representative ones in place of many similar classes (note how the AB3 Logic class diagram shows concrete

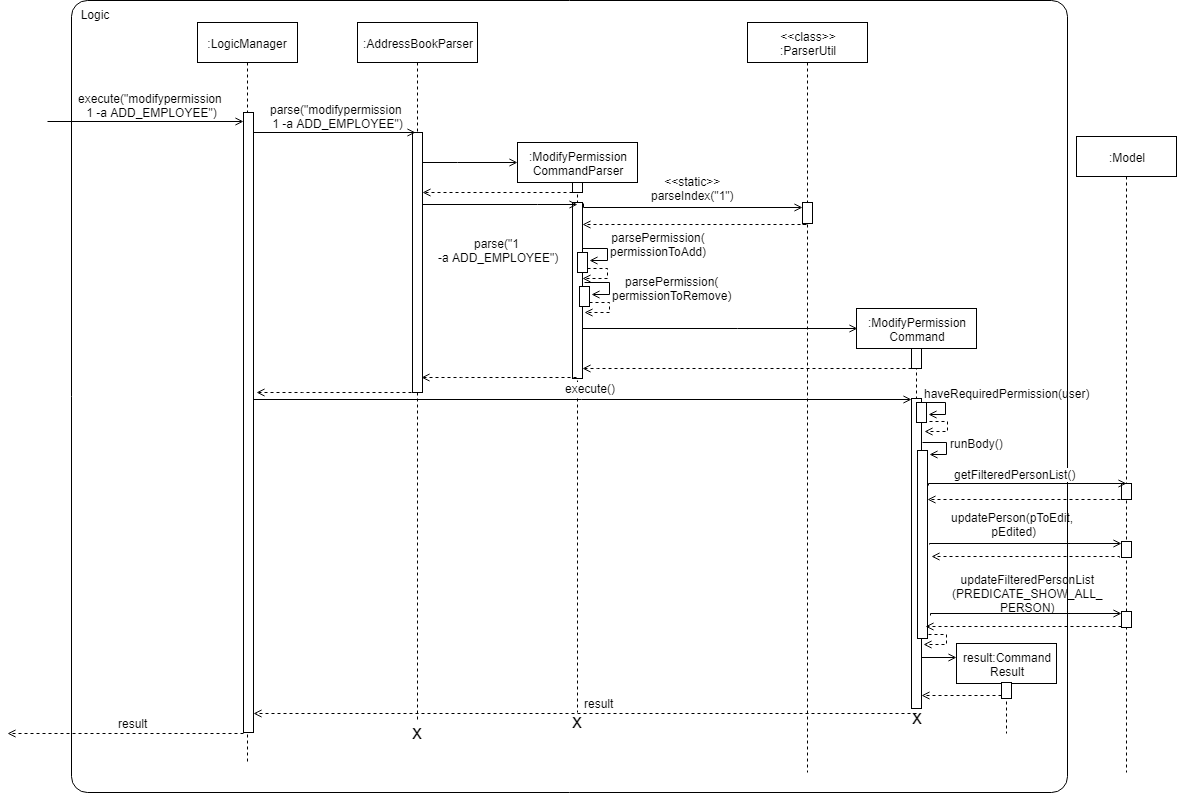

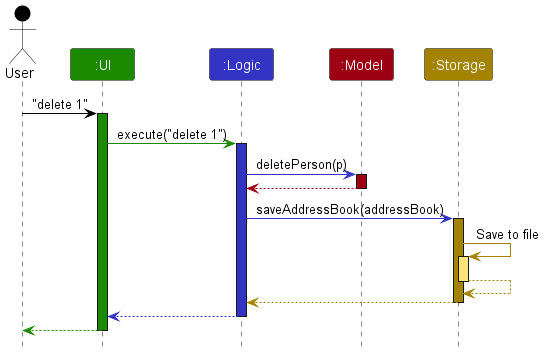

*Commandclasses using a placeholderXYZCommand). - Limit the scope of a diagram. Decide the purpose of the diagram (i.e., what does it help to explain?) and omit details not related to it. In particular, avoid showing lower-level details of multiple components in the same diagram unless strictly necessary e.g., note how the this sequence diagram shows only the detailed interactions within the Logic component i.e., does not show detailed interactions within the model component.

- Break diagrams into smaller fragments when possible.

- If a component has a lot of classes, consider further dividing into sub-components (e.g., a Parser sub-component inside the Logic component). After that, sub-components can be shown as black-boxes in the main diagram and their details can be shown as separate diagrams.

- You can use ref frames to break sequence diagrams into multiple diagrams. Similarly, rakes can be used to divide activity diagrams.

- Stay at the highest level of abstraction possible e.g., note how this sequence diagram shows only the interactions between architectural components, abstracting away the interactions that happen inside each component.

- Use visual representations as much as possible. E.g., show associations and navigabilities using lines and arrows connecting classes, rather than adding a variable in one of the classes.

- For some more examples of what NOT to do, see here.


- Integrate diagrams into the description. Place the diagram close to where it is being described.

- Use code snippets sparingly. The more you use code snippets in the DG, and the longer the code snippets, the higher the risk of them getting outdated quickly. Instead, use code snippets only when necessary and cite only the strictly relevant parts. You can also use pseudo code instead of actual programming code.

- Resize diagrams so that the text size in the diagram matches the text size of the main text of the document. See example.

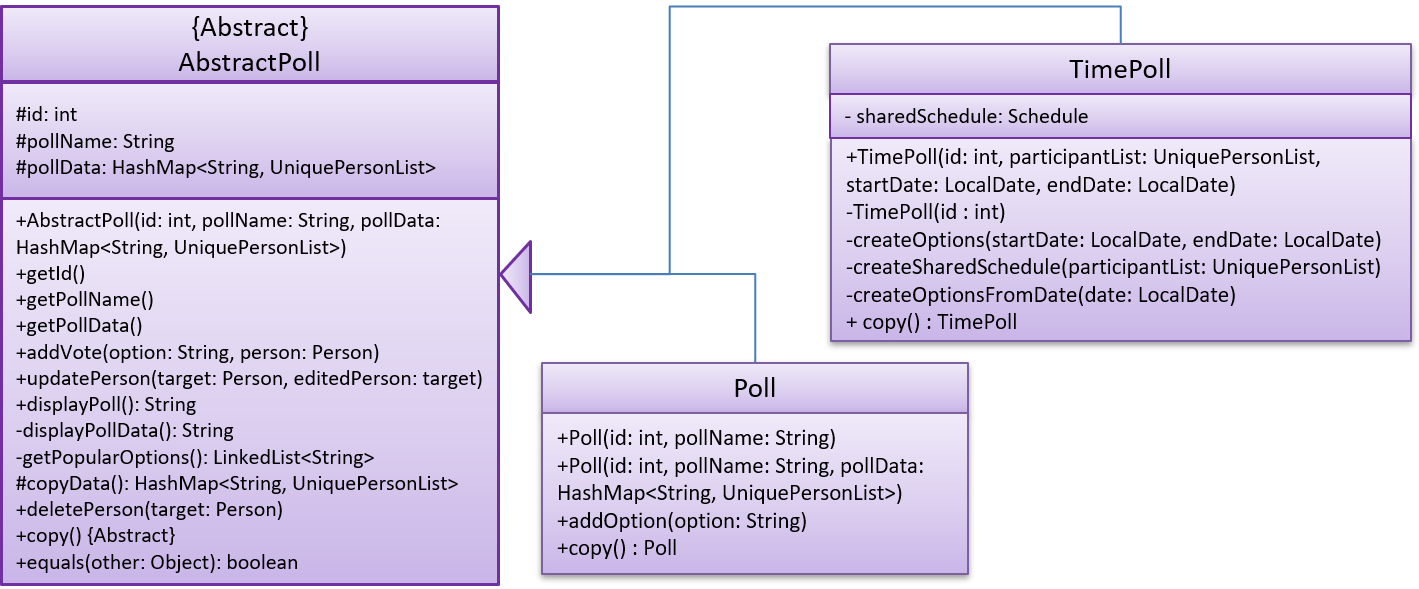

These class diagrams seem to have a lot of member details, which can get outdated pretty quickly:

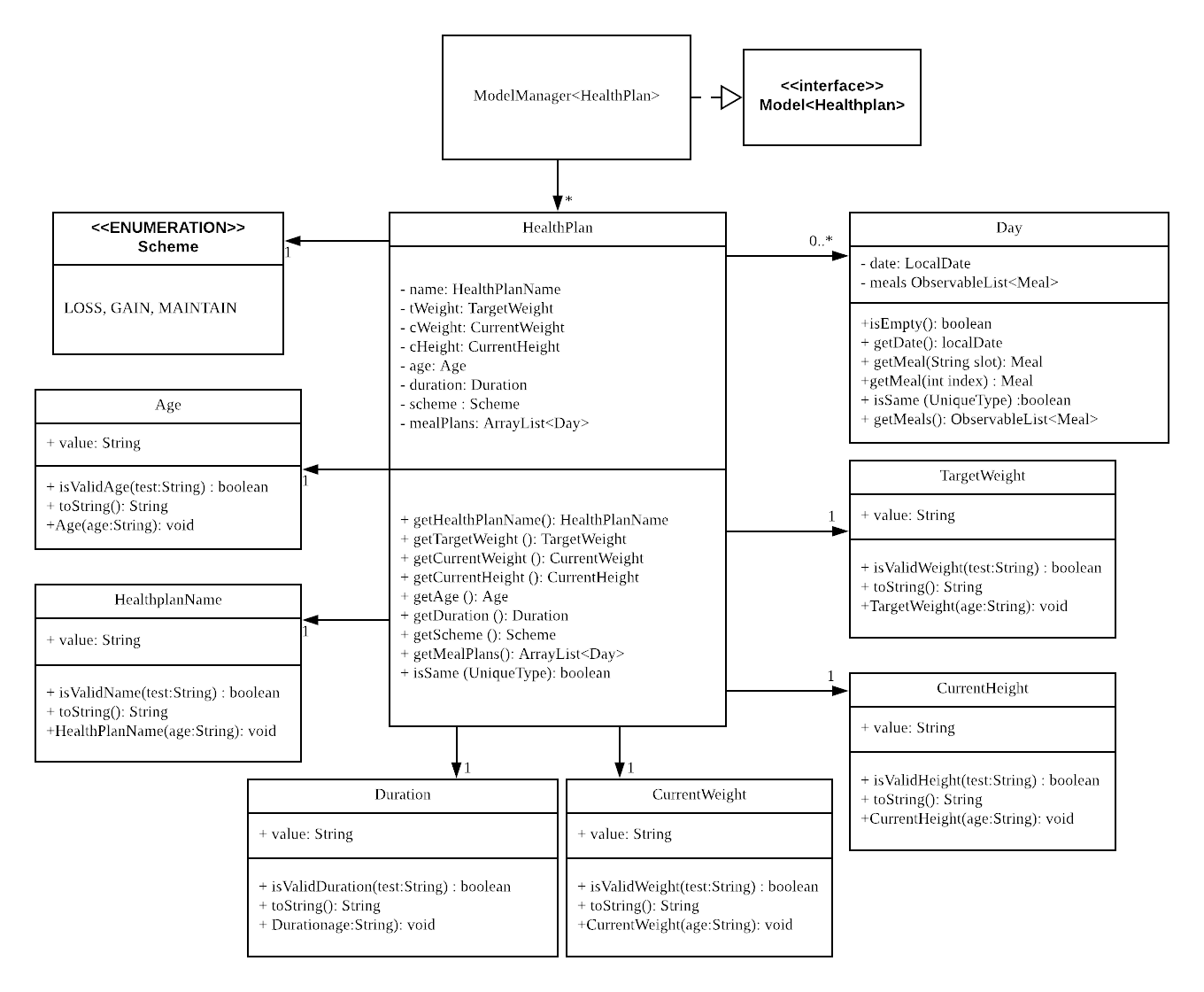

This class diagram seems to have too many classes:

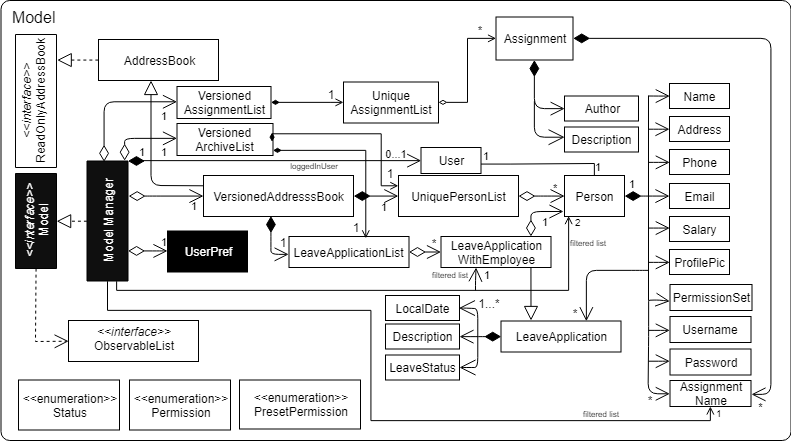

These sequence diagrams are bordering on 'too complicated':

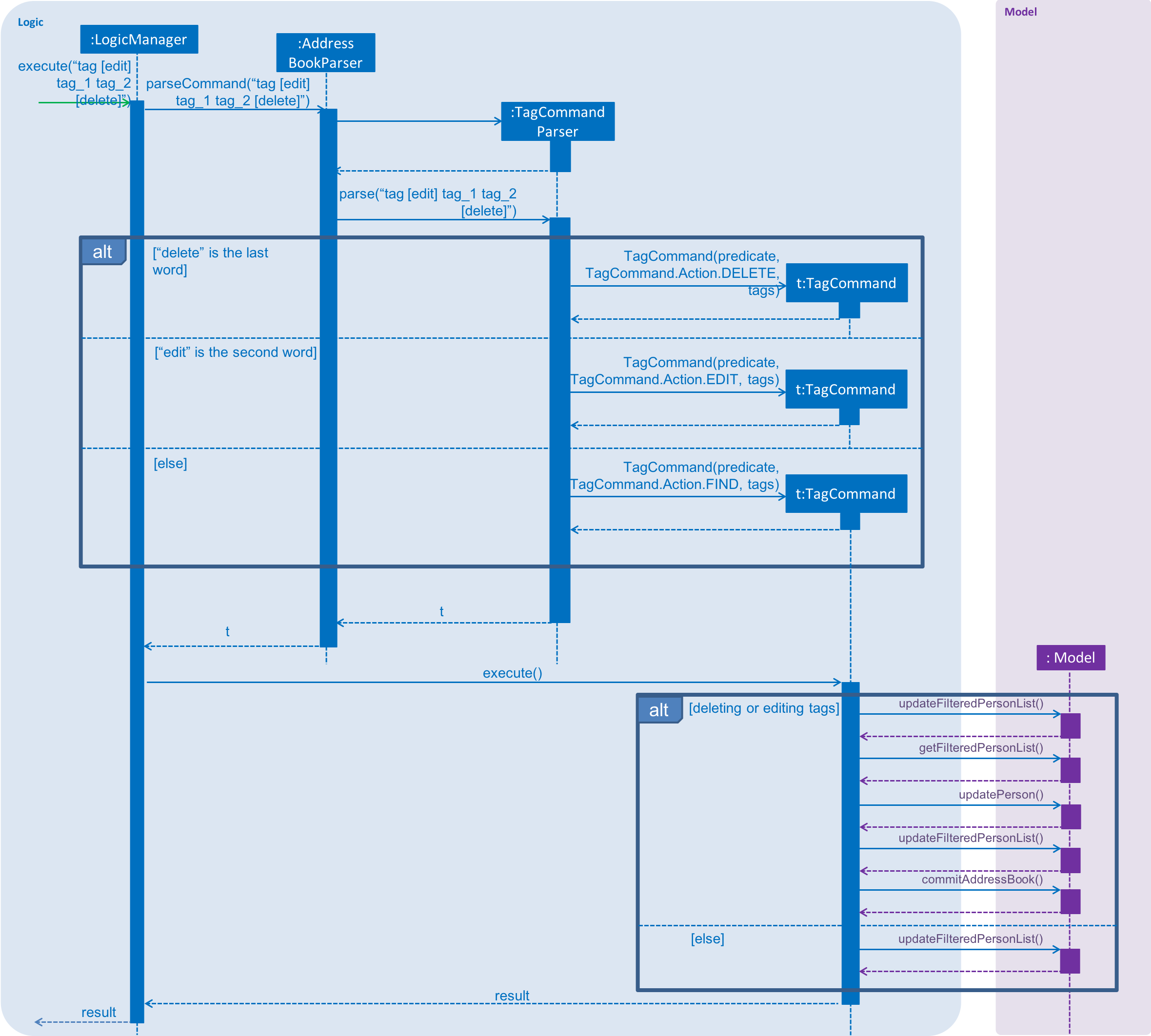

In this negative example, the text size in the diagram is much bigger than the text size used by the document:

It will look more 'polished' if the two text sizes match.

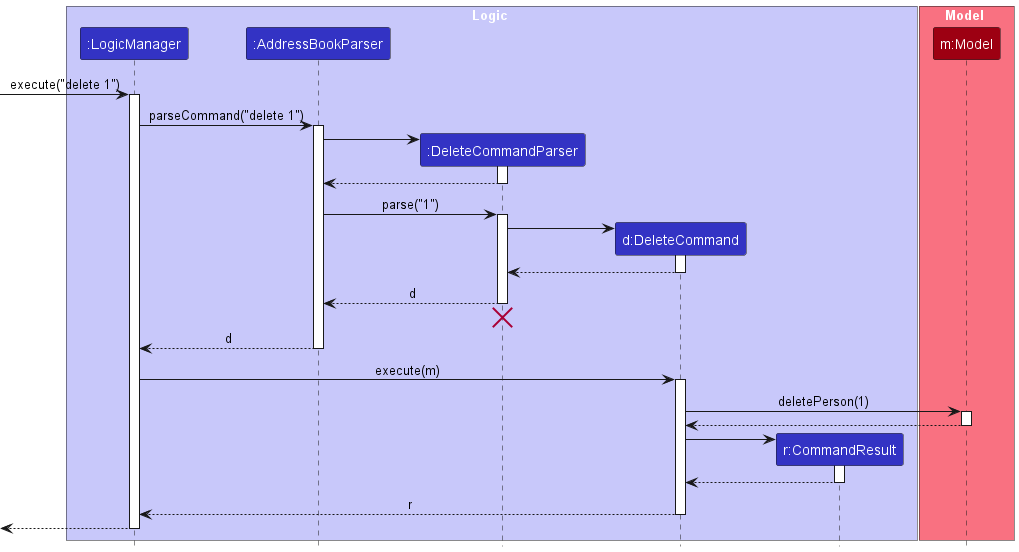


- Project Portfolio Page (PPP):

- PDF file: submission is similar to the UG

File name: [TEAM_ID][Your full Name as Given in LumiNUS]PPP.pdf e.g., [CS2103-T09-2][Leow Wai Kit, John]PPP.pdf

Use - in place of / if your name has it e.g., Ravi s/o Veegan → Ravi s-o Veegan (reason: Windows does not allow / in file names)

- HTML version: make available on github.io
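The file-naming patterns used for the jar, UG, DG, and PPP submissions can be generated rather than typed by hand, which helps avoid non-compliant names. The sketch below is illustrative only; both function names are hypothetical, and the `/` replacement is applied to the PPP name only because that is the only place the instructions mention it.

```python
def deliverable_file_name(team_id: str, product_name: str, suffix: str) -> str:
    """Build a team deliverable name, e.g. suffix '.jar', 'UG.pdf' or 'DG.pdf'."""
    return f"[{team_id}][{product_name}]{suffix}"


def ppp_file_name(team_id: str, full_name: str) -> str:
    """Build a PPP PDF name from the full name as given in LumiNUS."""
    # Windows does not allow '/' in file names, so use '-' in its place.
    safe_name = full_name.replace("/", "-")
    return f"[{team_id}][{safe_name}]PPP.pdf"
```

For example, `ppp_file_name("CS2103-T09-2", "Ravi s/o Veegan")` gives `[CS2103-T09-2][Ravi s-o Veegan]PPP.pdf`.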

Admin tP → Deliverables → Project Portfolio Page

At the end of the project each student is required to submit a Project Portfolio Page.

PPP Objectives

- For you to use (e.g. in your resume) as a well-documented data point of your SE experience

- For evaluators to use as a data point for evaluating your project contributions

PPP Sections to include

- Overview: A short overview of your product to provide some context to the reader. The opening 1-2 sentences may be reused by all team members. If your product overview extends beyond 1-2 sentences, the remainder should be written by yourself.

- Summary of Contributions -- Suggested items to include:

- Code contributed: Give a link to your code on tP Code Dashboard. The link is available in the Project List Page -- linked to the icon under your profile picture.

- Enhancements implemented: A summary of the enhancements you implemented.

- Contributions to documentation: Which sections did you contribute to the UG?

- Contributions to the DG: Which sections did you contribute to the DG? Which UML diagrams did you add/update?

- Contributions to team-based tasks:

- Review/mentoring contributions: Links to PRs reviewed, instances of helping team members in other ways

- Contributions beyond the project team:

- Evidence of helping others e.g., responses you posted in our forum, bugs you reported in other teams' products

- Evidence of technical leadership e.g. sharing useful information in the forum

Team-tasks are the tasks that someone in the team has to do.

Examples of team-tasks

Here is a non-exhaustive list of team-tasks:

- Setting up the GitHub team org/repo

- Necessary general code enhancements e.g.,

- Work related to renaming the product

- Work related to changing the product icon

- Morphing the product into a different product

- Setting up tools e.g., GitHub, Gradle

- Maintaining the issue tracker

- Release management

- Updating user/developer docs that are not specific to a feature e.g. documenting the target user profile

- Incorporating more useful tools/libraries/frameworks into the product or the project workflow (e.g. automate more aspects of the project workflow using a GitHub plugin)

Keep in mind that evaluators will use the PPP to estimate your project effort. We recommend that you mention things that will earn you a fair score e.g., explain how deep the enhancement is, why it is complete, how hard it was to implement etc.

- [Optional] Contributions to the Developer Guide (Extracts): Reproduce the parts in the Developer Guide that you wrote. Alternatively, you can show the various diagrams you contributed.

- [Optional] Contributions to the User Guide (Extracts): Reproduce the parts in the User Guide that you wrote.

PPP Format

- File name (i.e., in the repo): docs/team/github_username_in_lower_case.md e.g., docs/team/goodcoder123.md

- Follow the example in the AddressBook-Level3


PPP Page Limit

| Content | Recommended (pages) | Hard Limit (pages) |
|---|---|---|
| Overview + Summary of contributions | 0.5-1 | 2 |
| [Optional] Contributions to the User Guide | 1 | |
| [Optional] Contributions to the Developer Guide | 3 | |

- The page limits given above are after converting to PDF format. The amount of content you require is actually less than what these numbers suggest because the HTML → PDF conversion adds a lot of spacing around content.

- Product Website: Update website (home page, Ui.png, AboutUs.md etc.) on GitHub. Ensure the website is auto-published.

Admin tP → Deliverables → Product Website

When setting up your team repo, you would be configuring the GitHub Pages feature to publish your documentation as a website.

Website Home page

- Update to match your product.

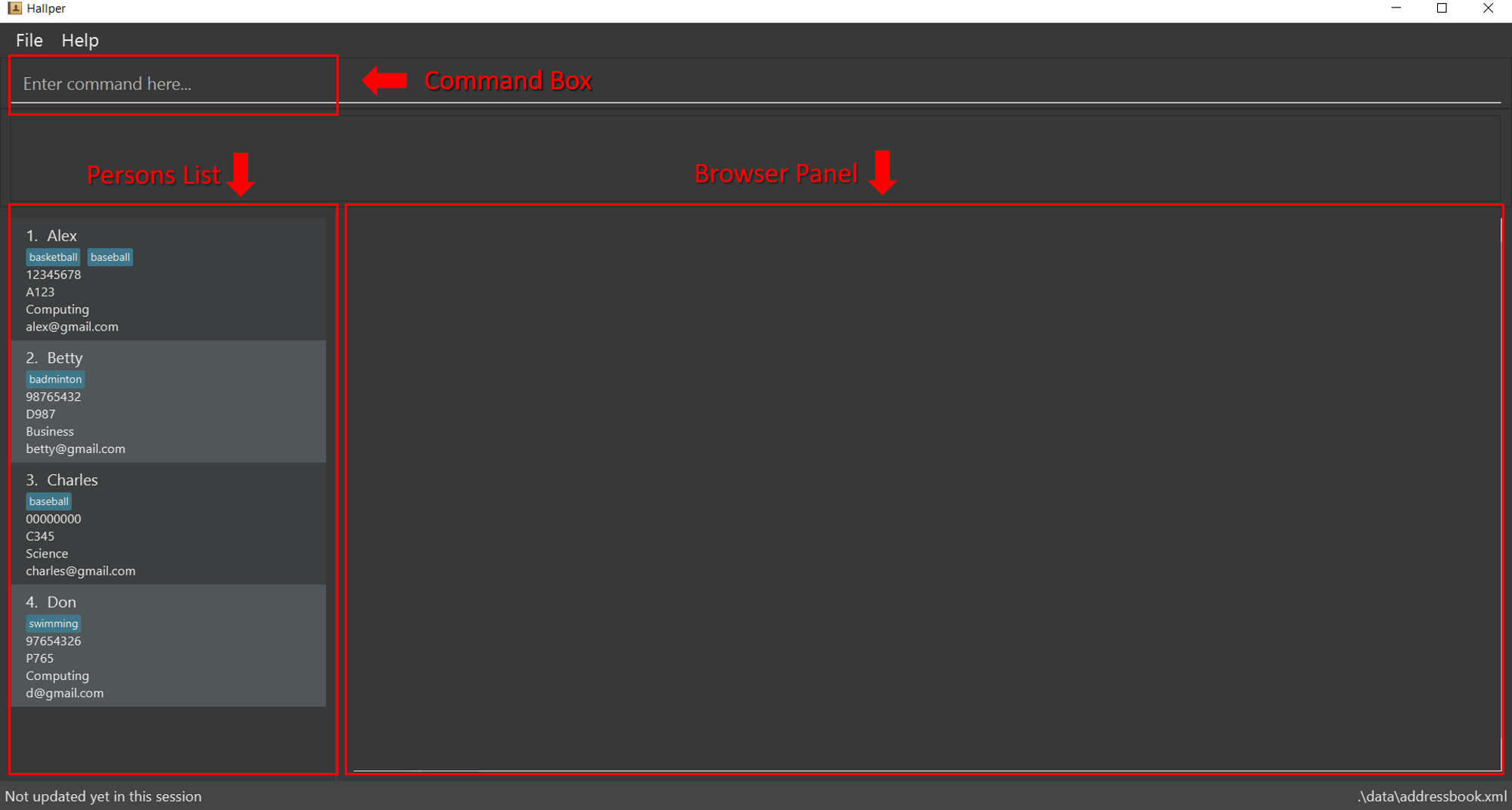

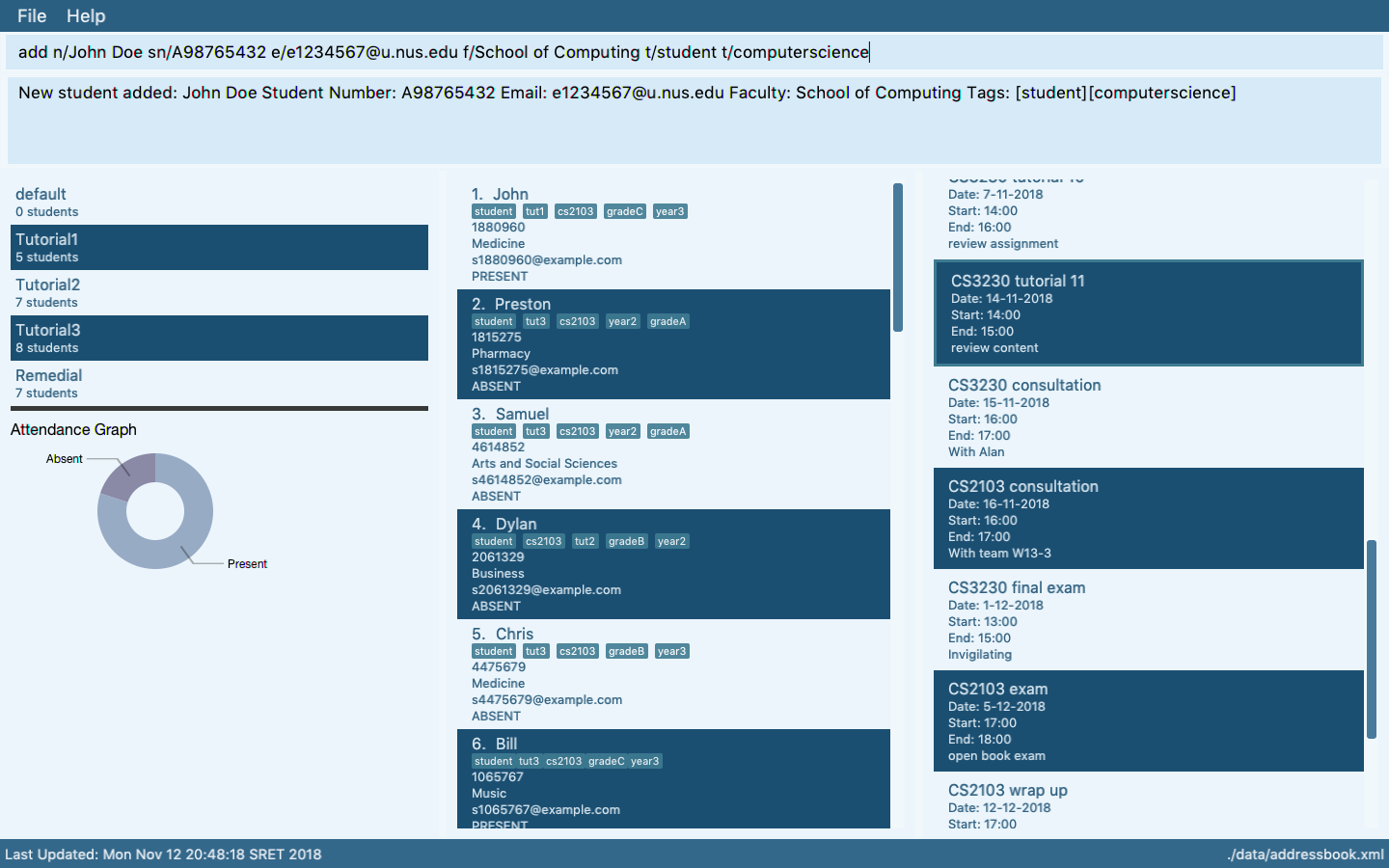

Website Ui.png

- Ensure the Ui.png matches the current product

Some common sense tips for a good product screenshot

Ui.png represents your product in its full glory.

- Before taking the screenshot, populate the product with data that makes the product look good. For example, if the product is supposed to show profile photos, use real profile photos instead of dummy placeholders.

- If the product doesn't have nice line wrapping for long inputs/outputs, don't use such inputs/outputs for the screenshot.

- It should show a state in which the product is well-populated i.e., don't leave data panels largely blank.

- Choose a state that showcases the main features of the product i.e., the login screen is not usually a good choice.

- Take a clean screenshot with a decent resolution. Some screenshot tools can capture a specified window only. If your tool cannot do that, make sure you crop away the extraneous parts captured by the screenshot.

- Avoid annotations (arrows, callouts, explanatory text etc.); it should look like the product is in use for real.

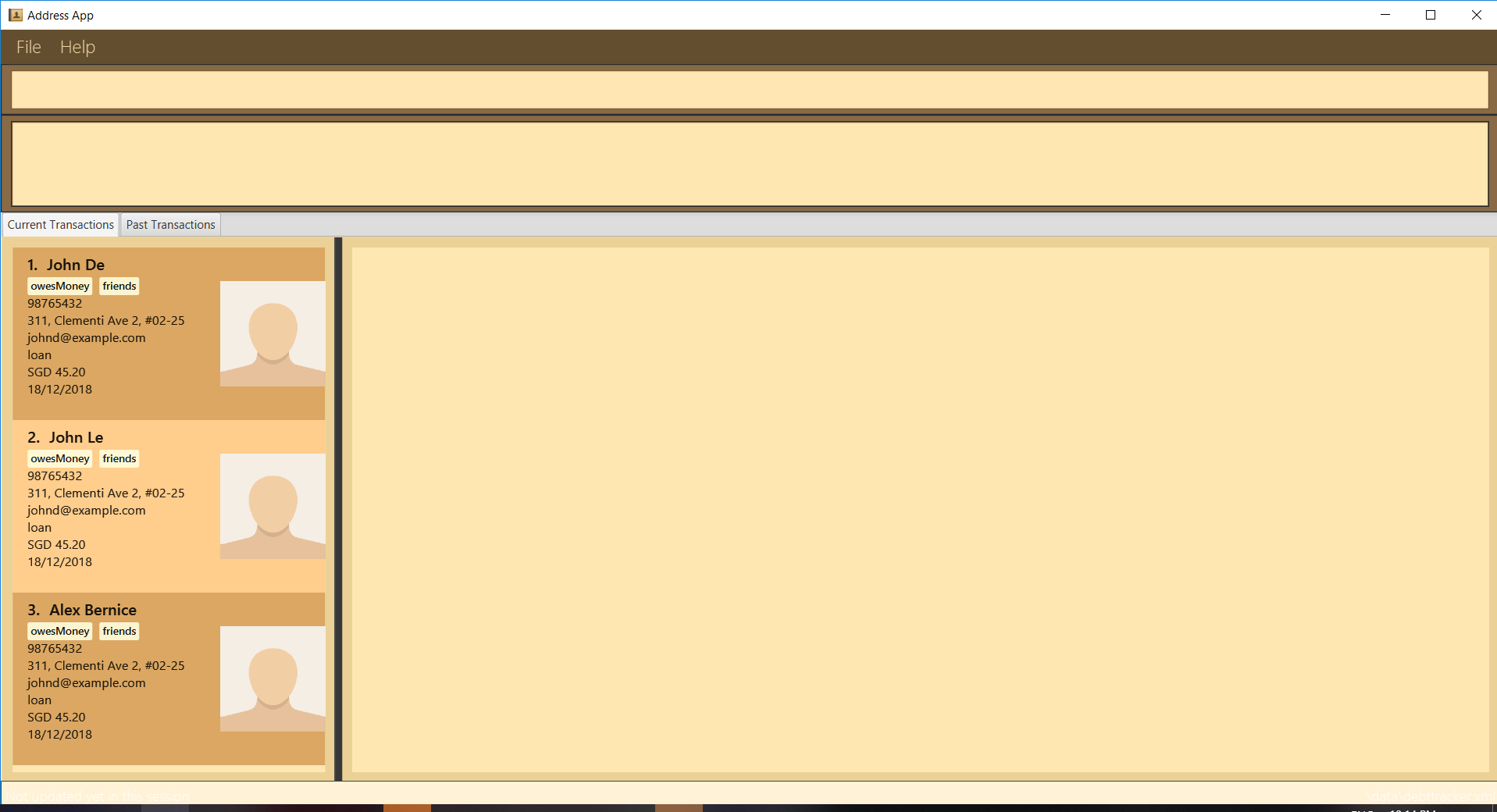

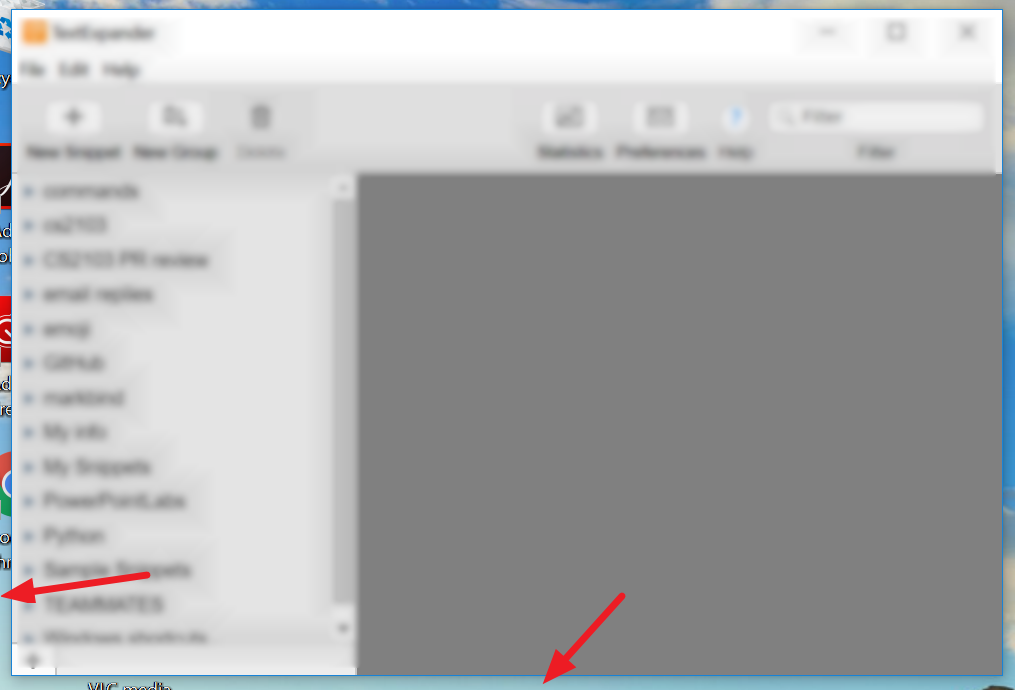

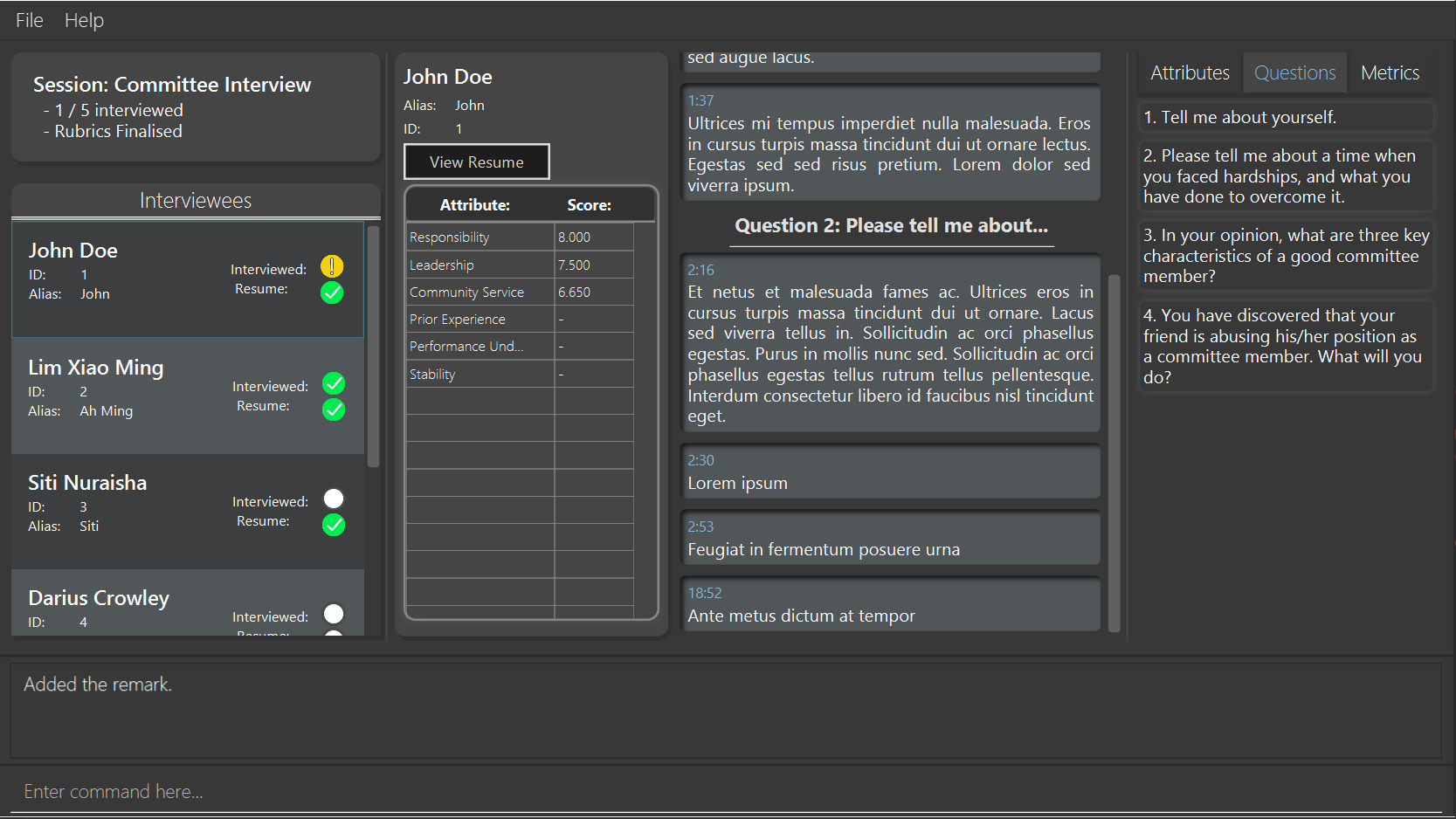

Examples

Reason: Distracting annotations.

Reason: Not enough data. Should have used real profile pictures instead of placeholder images.

Reason: screenshot not cropped cleanly (contains extra background details)

Website AboutUs Page

- Use a suitable profile photo.

The purpose of the profile photo is for the reader to identify you. Therefore, choose a recent individual photo showing your face clearly (i.e., not too small) -- somewhat similar to a passport photo. Given below are some examples of good and bad profile photos.

If you are uncomfortable posting your photo due to security reasons, you can post a lower resolution image so that it is hard for someone to misuse that image for fraudulent purposes. If you are concerned about privacy, you may use a placeholder image in place of the photo in module-related documents that are publicly visible.

- Include a link to each person's PPP page.

- Team member names should match the full names used by LumiNUS.

Website UG (Web Page)

- Should match the submitted PDF file.

Website DG (Web Page)

- Should match the submitted PDF file.

3 Wrap up the milestone Wed, Nov 11th 2359

- As usual, wrap up the milestone on GitHub. Note that the deadline for this is the same for everyone (i.e., does not depend on your tutorial).

4 Submit the demo video Wed, Nov 11th 2359

Admin tP → Deliverables → Demo

- Record a demo of all the product features, in a reasonable order.

- You may choose to screen record each feature and tie it up (see the "Suggested tools" below for options), OR

- Schedule + record a Zoom meeting within the team, where you share your screens and do the demo.

- The quality of the demo will not affect marks as long as it serves the purpose (i.e., demonstrates the product features). Hence, don't waste too much time on creating the video.

- Audio explanations are strongly encouraged (but not compulsory) -- alternatively, you can switch between slides and the app to give additional explanations.

- Annotations and other enhancements to the video are optional (those will not earn any extra marks).

- All members taking part in the demo video is encouraged but not compulsory.

- File name: [TEAM_ID][product Name].mp4 e.g., [CS2103-T09-2][Contacts Plus].mp4 (other video formats are acceptable but use a format that works on all major OS'es).

- File size: Recommended to keep below 200MB. You can use a low resolution as long as the video is in usable quality.

- Submission: Submit to LumiNUS (different folder).

- Deadline: 2 days after the main deadline

- Suggested tools:

Demo Duration

- Strictly 18 minutes for a 5-person team, 15 minutes for a 4-person team, 21 minutes for a 6-person team. Exceeding this limit will be penalized.
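The stated limits work out to 3 minutes per team member plus 3 minutes. A minimal sketch for checking a recorded video against the limit follows; the function name is hypothetical, and only the three team sizes mentioned above are covered because no other sizes are specified.

```python
# Limits stated above, keyed by team size (minutes).
DEMO_LIMIT_MINUTES = {4: 15, 5: 18, 6: 21}


def demo_is_within_limit(team_size: int, duration_seconds: float) -> bool:
    """Return True if a demo of the given length does not exceed the limit."""
    if team_size not in DEMO_LIMIT_MINUTES:
        raise ValueError(f"no demo limit is stated for a team of {team_size}")
    return duration_seconds <= DEMO_LIMIT_MINUTES[team_size] * 60
```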

Demo Target audience

- Assume you are giving a demo to a higher-level manager of your company, to brief him/her on the current capabilities of the product. This is the first time they are seeing the new product you developed. The actual audience are the evaluators (the team supervisor and another tutor).

Demo Scope

- Start by giving an overview of the product so that the evaluators get a sense of the full picture early. Include the following:

- What is it? e.g., FooBar is a product to ensure the user takes frequent standing-breaks while working.

- Who is it for? e.g., It is for someone who works at a PC, prefers typing, and wants to avoid prolonged periods of sitting.

- How does it help? Give an overview of how the product's features help to solve the target problem for the target user

Here is an example:

Hi, welcome to the demo of our product FooBar. It is a product to ensure the user takes frequent standing-breaks while working.

It is for someone who works at a PC, prefers typing, and wants to avoid prolonged periods of sitting.

The user first sets the parameters such as frequency and targets, and then enters a command to record the start of the sitting time, ... The app shows the length of the sitting periods, and alerts the user if ...

...

- There is no need to introduce team members or explain who did what. Reason: to save time.

- Present the features in a reasonable order: Organize the demo to present a cohesive picture of the product as a whole, presented in a logical order.

- No need to cover design/implementation details as the manager is not interested in those details.

Demo Structure

- Demo the product using the same executable you submitted

- Use a sufficient amount of realistic demo data (e.g., Mr aaa is not a realistic person name) e.g., at least 20 data items. Trying to demo a product using just 1-2 sample data items creates a bad impression.

Demo Tips

- Plan the demo to be in sync with the impression you want to create. For example, if you are trying to convince that the product is easy to use, show the easiest way to perform a task before you show the full command with all the bells and whistles.

5 Prepare for the practical exam

Admin → tP → PE Overview

Objectives:

- The primary objective of the PE is to increase the rigor of project grading. Assessing most aspects of the project involves an element of subjectivity. As the project counts for a large percentage of the final grade, it is not prudent to rely on evaluations of tutors alone as there can be significant variations between how different tutors assess projects. That is why we collect more data points via the PE so as to minimize the chance of your project being affected by evaluator-bias.

- PE is also used to evaluate your manual testing skills, product evaluation skills, effort estimation skills etc.

- Note that significant project components are not graded solely based on peer ratings. Rather, PE data are cross-validated with tutors' grades to identify cases that need further investigation. When peer inputs are used for grading, they are usually combined with tutors' grades with appropriate weight for each. In some cases ratings from team members are given a higher weight compared to ratings from other peers, if that is appropriate.

- PE is not a means of pitting you against each other. Developers and testers play for the same side; they need to push each other to improve the quality of their work -- not bring down each other.

Grading:

- Your performance in the practical exam will affect your final grade and your peers', as explained in the Admin: Project Grading section.

- As such, we have put in measures to identify and penalize insincere/random evaluations.

- Also see:

Admin tP Grading → Notes on how marks are calculated for PE

Grading bugs found in the PE

- Of the Developer Testing component (3A: based on the bugs found in your code) and the System/Acceptance Testing component (3B: based on the bugs found in others' code) above, the one you do better in will be given a 70% weight and the other a 30% weight so that your total score is driven by your strengths rather than weaknesses.

- Bugs rejected by the dev team, if the rejection is approved by the teaching team, will not affect marks of the tester or the developer.

- The penalty/credit for a bug varies based on the severity of the bug: severity.High > severity.Medium > severity.Low > severity.VeryLow

- The three types (i.e., type.FunctionalityBug, type.DocumentationBug, type.FeatureFlaw) are counted for three different grade components. The penalty/credit can vary based on the bug type. Given that you are not told which type has a bigger impact on the grade, always choose the most suitable type for a bug rather than try to choose a type that benefits your grade.

- The penalty for a bug is divided equally among assignees.

- Developers are not penalized for duplicate bug reports they received but the testers earn credit for duplicate bug reports they submitted as long as the duplicates are not submitted by the same tester.

- Obvious bugs (i.e., the same bug reported by many testers) earn less credit for the tester and slightly higher penalty for the developer.

- If the team you tested has a low bug count i.e., total bugs found by all testers is low, we will fall back on other means (e.g., performance in PE dry run) to calculate your marks for system/acceptance testing.

- Your marks for developer testing depend on the bug density rather than total bug count. Here's an example:

n bugs found in your feature; it is a big feature consisting of a lot of code → 4/5 marks

n bugs found in your feature; it is a small feature with a small amount of code → 1/5 marks

- You don't need to find all bugs in the product to get full marks. For example, finding half of the bugs of that product or 4 bugs, whichever is lower, could earn you full marks.

- Excessive incorrect downgrading/rejecting/duplicate-flagging (i.e., marking as duplicates), if deemed an attempt to game the system, will be penalized.
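Three of the arithmetic rules in the notes above (the 70%/30% weighting, the equal split of a bug's penalty among assignees, and the "half of the bugs or 4, whichever is lower" example) can be sketched as follows. This is a hedged illustration, not the actual grading script: the function names are hypothetical, the marks scale is left open, and the last rule is described in the text only as an example of what could earn full marks.

```python
def combined_testing_score(developer_testing: float, system_testing: float) -> float:
    """Weight the better of the two components at 70% and the other at 30%."""
    better = max(developer_testing, system_testing)
    other = min(developer_testing, system_testing)
    return 0.7 * better + 0.3 * other


def penalty_per_assignee(penalty: float, assignees: list) -> dict:
    """Divide a bug's penalty equally among the developers assigned to it."""
    share = penalty / len(assignees)
    return {name: share for name in assignees}


def bugs_for_full_marks(total_bugs_in_product: int) -> float:
    """Example threshold from the text: half of the bugs or 4, whichever is lower."""
    return min(total_bugs_in_product / 2, 4)
```

Because the better component always receives the larger weight, swapping the two component scores does not change the combined result.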

Admin → tP → PE-D/PE Preparation

- Ensure that you have accepted the invitation to join the GitHub org used by the module. Go to https://github.com/nus-cs2103-AY2021S1 to accept the invitation.

- Ensure you have access to a computer that is able to run module projects e.g., has the right Java version.

- Download the latest CATcher and ensure you can run it on your computer.

If not using CATcher

Issues created for PE-D and PE need to be in a precise format for our grading scripts to work. Incorrectly-formatted responses will have to be discarded. Therefore, you are not allowed to use the GitHub interface for PE-D and PE activities, unless you have obtained our permission first.

- Create a public repo in your GitHub account with the following name:

- PE Dry Run: ped

- PE: pe

- Enable its issue tracker and add the following labels to it (the label names should be precisely as given).

Bug Severity labels:

- severity.VeryLow: A flaw that is purely cosmetic and does not affect usage e.g., a typo/spacing/layout/color/font issue in the docs or the UI that doesn't affect usage.

- severity.Low: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.

- severity.Medium: A flaw that causes occasional inconvenience to some users but they can continue to use the product.

- severity.High: A flaw that affects most users and causes major problems for users i.e., makes the product almost unusable for most users.

When applying for documentation bugs, replace user with reader.

Type labels:

- type.FunctionalityBug: A functionality does not work as specified/expected.

- type.FeatureFlaw: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features. These issues are counted against the product design aspect of the project.

- type.DocumentationBug: A flaw in the documentation e.g., a missing step, a wrong instruction, typos
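Since the label names must be exactly as given, a small check like the one below can confirm that a manually set up repo has all seven labels. It is a sketch under assumptions: the helper names are hypothetical, and how you obtain the list of label names from your repo is left out.

```python
# Severity labels, ordered from least to most severe.
SEVERITY_ORDER = ["severity.VeryLow", "severity.Low", "severity.Medium", "severity.High"]
TYPE_LABELS = {"type.FunctionalityBug", "type.FeatureFlaw", "type.DocumentationBug"}


def missing_labels(repo_labels):
    """Return the required label names (case-sensitive) the repo does not have."""
    required = set(SEVERITY_ORDER) | TYPE_LABELS
    return sorted(required - set(repo_labels))


def more_severe(label_a: str, label_b: str) -> bool:
    """Return True if label_a ranks strictly above label_b in severity."""
    return SEVERITY_ORDER.index(label_a) > SEVERITY_ORDER.index(label_b)
```

A misspelled or differently-cased label such as `severity.high` does not count as a match, so `severity.High` would still be reported as missing.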

- Have a good screen grab tool with annotation features so that you can quickly take a screenshot of a bug, annotate it, and post in the issue tracker.

- You can use Ctrl+V to paste a picture from the clipboard into a text box in the bug report.

- Download the product to be tested.

- After you have been notified which team to test (likely to be in the morning of PE-D day), download the jar file from the team's releases page.

- After you have been notified of the download location, download the zip file that bears your name.

6 Attend the practical exam during lecture on Fri, Nov 13th 2359

- Attend the practical test, to be done during the lecture.

Admin tP → Practical Exam

PE Overview

Objectives:

- The primary objective of the PE is to increase the rigor of project grading. Assessing most aspects of the project involves an element subjectivity. As the project counts for a large percentage of the final grade, it is not prudent to rely on evaluations of tutors alone as there can be significant variations between how different tutors assess projects. That is why we collect more data points via the PE so as to minimize the chance of your project being affected by evaluator-bias.

- PE is also used to evaluate your manual testing skills, product evaluation skills, effort estimation skills etc.

- Note that significant project components are not graded solely based on peer ratings. Rather, PE data are cross-validated with tutors' grades to identify cases that need further investigation. When peer inputs are used for grading, they are usually combined with tutors' grades with appropriate weight for each. In some cases ratings from team members are given a higher weight compared to ratings from other peers, if that is appropriate.

- PE is not a means of pitting you against each other. Developers and testers play for the same side; they need to push each other to improve the quality of their work -- not bring down each other.

Grading:

- Your performance in the practical exam will affect your final grade and your peers', as explained in the Admin: Project Grading section.

- As such, we have put in measures to identify and penalize insincere/random evaluations.

- Also see:

Admin tP Grading → Notes on how marks are calculated for PE

Grading bugs found in the PE

- Of the Developer Testing component (3A, based on the bugs found in your code) and the System/Acceptance Testing component (3B, based on the bugs found in others' code), the one you do better in will be given a 70% weight and the other a 30% weight so that your total score is driven by your strengths rather than weaknesses.

- Bugs rejected by the dev team, if the rejection is approved by the teaching team, will not affect marks of the tester or the developer.

- The penalty/credit for a bug varies based on the severity of the bug: `severity.High` > `severity.Medium` > `severity.Low` > `severity.VeryLow`

- The three types (i.e., `type.FunctionalityBug`, `type.DocumentationBug`, `type.FeatureFlaw`) are counted for three different grade components. The penalty/credit can vary based on the bug type. Given that you are not told which type has a bigger impact on the grade, always choose the most suitable type for a bug rather than try to choose a type that benefits your grade.

- The penalty for a bug is divided equally among assignees.

- Developers are not penalized for duplicate bug reports they received but the testers earn credit for duplicate bug reports they submitted as long as the duplicates are not submitted by the same tester.

- Obvious bugs (i.e., the same bug reported by many testers) earn less credit for the tester and a slightly higher penalty for the developer.

- If the team you tested has a low bug count i.e., total bugs found by all testers is low, we will fall back on other means (e.g., performance in PE dry run) to calculate your marks for system/acceptance testing.

- Your marks for developer testing depend on the bug density rather than total bug count. Here's an example:

  - `n` bugs found in your feature; it is a big feature consisting of a lot of code → 4/5 marks
  - `n` bugs found in your feature; it is a small feature with a small amount of code → 1/5 marks

- You don't need to find all bugs in the product to get full marks. For example, finding half of the bugs of that product or 4 bugs, whichever is lower, could earn you full marks.

- Excessive incorrect downgrading/rejecting/duplicate-flagging (i.e., marking as duplicates), if deemed an attempt to game the system, will be penalized.
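The 70%/30% weighting and the "half of the bugs or 4, whichever is lower" example above can be sketched as follows. This is only an illustration: the class and method names are hypothetical, the 0-100 score scale is an assumption, and rounding half of an odd bug count upwards is also an assumption, since the text does not say how the actual grading scripts handle these details.

```java
public class PeScoreSketch {
    /**
     * Combines the two testing components: the stronger one gets a 70% weight,
     * the weaker one a 30% weight. Scores are assumed to be on a 0-100 scale.
     */
    static double combine(int developerTesting, int systemTesting) {
        int better = Math.max(developerTesting, systemTesting);
        int other = Math.min(developerTesting, systemTesting);
        return (70 * better + 30 * other) / 100.0;
    }

    /**
     * Number of bugs that could earn full tester marks in the example:
     * half of the product's bugs or 4, whichever is lower.
     * Assumption: half of an odd count is rounded up.
     */
    static int bugsForFullMarks(int totalBugsInProduct) {
        int half = (totalBugsInProduct + 1) / 2;
        return Math.min(half, 4);
    }

    public static void main(String[] args) {
        System.out.println(combine(80, 50));      // 71.0
        System.out.println(combine(50, 80));      // 71.0 (order does not matter)
        System.out.println(bugsForFullMarks(6));  // 3
        System.out.println(bugsForFullMarks(20)); // 4
    }
}
```

Whichever component is stronger dominates the result, which is why swapping the two arguments gives the same combined score.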

PE Preparation

- It's similar to the PE-D Preparation:

- Ensure that you have accepted the invitation to join the GitHub org used by the module. Go to https://github.com/nus-cs2103-AY2021S1 to accept the invitation.

- Ensure you have access to a computer that is able to run module projects e.g. has the right Java version.

- Download the latest CATcher and ensure you can run it on your computer.

If not using CATcher

Issues created for PE-D and PE need to be in a precise format for our grading scripts to work. Incorrectly-formatted responses will have to be discarded. Therefore, you are not allowed to use the GitHub interface for PE-D and PE activities, unless you have obtained our permission first.

- Create a public repo in your GitHub account with the following name:

  - PE Dry Run: `ped`
  - PE: `pe`

- Enable its issue tracker and add the following labels to it (the label names should be precisely as given).

Bug Severity labels:

- `severity.VeryLow`: A flaw that is purely cosmetic and does not affect usage e.g., a typo/spacing/layout/color/font issues in the docs or the UI that doesn't affect usage.
- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.
- `severity.Medium`: A flaw that causes occasional inconvenience to some users but they can continue to use the product.
- `severity.High`: A flaw that affects most users and causes major problems for users. i.e., makes the product almost unusable for most users.

When applying for documentation bugs, replace user with reader.

Type labels:

- `type.FunctionalityBug`: A functionality does not work as specified/expected.
- `type.FeatureFlaw`: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage. i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features. These issues are counted against the product design aspect of the project.
- `type.DocumentationBug`: A flaw in the documentation e.g., a missing step, a wrong instruction, typos

- Have a good screen grab tool with annotation features so that you can quickly take a screenshot of a bug, annotate it, and post in the issue tracker.

- You can use Ctrl+V to paste a picture from the clipboard into a text box in bug report.

- Download the product to be tested.

- After you have been notified which team to test (likely to be in the morning of PE-D day), download the jar file from the team's releases page.

- After you have been notified of the download location, download the zip file that bears your name.

PE Phase 1: Bug Reporting

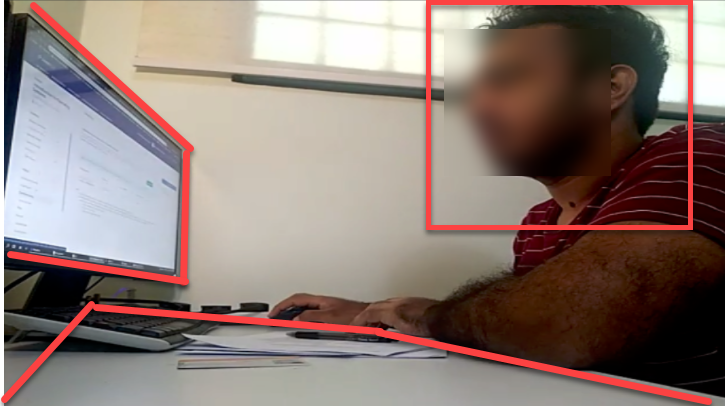

PE Phase 1 will be conducted under exam conditions. We will be following the SoC's E-Exam SOP, combined with the deviations/refinements given below. Any non-compliance will be dealt with similar to a non-compliance in the final exam.

- Proctoring will be done via Zoom. No admission if the following requirements are not met.

- You need two Zoom devices (PC: chat, audio video, Phone: video,audio), unless you have an external web cam for your PC.

- Add your `[PE_seat_number]` in front of the first name of your Zoom display name, in your Zoom devices. Seat numbers can be found here. e.g.,

  - `[M48] John Doe` (`M48` is the seat number)
  - `[M48][PC] John Doe` (for the PC, if using a phone as well)

- Set your camera so that all the following are visible:

- your head (side view)

- the computer screen

- the work area (i.e., the table top)


- Join the Zoom waiting room 15-30 minutes before the start time. Admitting you to the Zoom session can take some time.

- In case of Zoom outage, we'll fall back on MS Teams (MST). Make sure you have MST running and have joined the matching MST Team `CS2103_AY2021S1_12pm_lecture` or `CS2103_AY2021S1_4pm_lecture`.

- Recording the screen is not required.

- You are allowed to use head/ear phones.

- Only one screen is allowed.

- Do not use the public chat channel to ask questions from the prof. If you do, you might accidentally reveal which team you are testing.

- Do not use more than one CATcher instance at the same time. Our grading scripts will red-flag you if you use multiple CATcher instances in parallel.

- Use MS Teams (not Zoom) private messages to communicate with the prof. Fall back on Zoom chat only if you didn't receive a reply via MST.

- Do not view the Zoom video feeds of others while the testing is ongoing. Keep the video view minimized.

- During the bug reporting periods (i.e., PE Phase 1 - part I and PE Phase 1 - part II), do not use websites/software not in the list given below. In particular, do not visit GitHub. However, you are allowed to visit pages linked in the UG/DG for the purpose of checking if the link is correct. If you need to visit a different website or use another software, please ask for permission first.

- Website: LumiNUS

- Website: Module website (e.g., to look up PE info)

- Software: CATcher, any text editor, any screen grab software

- Software: PDF reader (to read the UG/DG or other references such as the textbook)

- Do not have any other software running in the background e.g., Telegram chat

- This is a manual testing session. Do not use any test automation tools or custom scripts.

- When, where: Week 13 lecture slot. Use the same Zoom link used for the regular lecture.

PE Phase 1 - Part I Product Testing [60 minutes]

Test the product and report bugs as described below. You may report both product bugs and documentation bugs during this period.

Testing instructions for PE and PE-D

a) Launching the JAR file

- Get the jar file to be tested:

- Download the jar file from the team's releases page, if you haven't done this already.

- Download the zip file from the given location, if you haven't done that already.

- Unzip the downloaded zip file with the password (to be given to you at the start of the PE, via LumiNUS gradebook). This will give you another zip file with the name suffix `_inner.zip`.

- Unzip the inner zip file. This will give you the jar file and other PDF files needed for the PE. Warning: do not run the jar file while it is still inside the zip file.

- Put the downloaded jar file in an empty folder.

- Open a command window. Run the `java -version` command to ensure you are using Java 11.

- Check the UG to see if there are extra things you need to do before launching the JAR file e.g., download another file from somewhere.
  You may visit the team's releases page on GitHub if they have provided some extra files you need to download.

- Launch the jar file using the `java -jar` command (do not use double-clicking).
  If you are on Windows, use the DOS prompt or the PowerShell (not the WSL terminal) to run the JAR file.

- If the product doesn't work at all: If the product fails catastrophically e.g., cannot even launch, or even the basic commands crash the app, contact the invigilator (via MS Teams, and failing that, via Zoom chat) to receive a fallback team to test.
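As a complement to running `java -version` by hand, the Java 11 check in the steps above could be sketched as a tiny program. This is not part of the official instructions: the class name `JavaVersionCheck` and the helper `featureVersion` are hypothetical, and the sketch only reads the standard `java.version` system property.

```java
public class JavaVersionCheck {
    /**
     * Extracts the feature (major) version from a version string
     * such as "11.0.9", "17" or the legacy form "1.8.0_271".
     */
    static int featureVersion(String version) {
        String[] parts = version.split("[._-]");
        int first = Integer.parseInt(parts[0]);
        // Versions before Java 9 are reported as 1.x (e.g., 1.8 means Java 8).
        return (first == 1 && parts.length > 1) ? Integer.parseInt(parts[1]) : first;
    }

    public static void main(String[] args) {
        String version = System.getProperty("java.version");
        int feature = featureVersion(version);
        System.out.println("Running on Java " + version);
        if (feature != 11) {
            System.out.println("Warning: expected Java 11 but found Java " + feature);
        }
    }
}
```

Running it with the same `java` command you intend to use for the jar file shows which Java installation that command actually resolves to.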

b) What to test

- Test the product based on the User Guide available from their GitHub website `https://{team-id}.github.io/tp/UserGuide.html`.

- Do system testing first i.e., does the product work as specified by the documentation? If there is time left, you can do acceptance testing as well i.e., does the product solve the problem it claims to solve?

- Test based on the Developer Guide (Appendix named Instructions for Manual Testing) and the User Guide. The testing instructions in the Developer Guide can provide you some guidance but if you follow those instructions strictly, you are unlikely to find many bugs. You can deviate from the instructions to probe areas that are more likely to have bugs.

- As before, do both system testing and acceptance testing but give priority to system testing as those bugs can earn you more credit.

c) What bugs to report?

- You may report functionality bugs, UG bugs, and feature flaws.

Admin tP Grading → Functionality Bugs

These are considered functionality bugs:

Behavior differs from the User Guide

A legitimate user behavior is not handled e.g. incorrect commands, extra parameters

Behavior is not specified and differs from normal expectations e.g. error message does not match the error

Admin tP Grading → Feature Flaws

These are considered feature flaws:

The feature does not solve the stated problem of the intended user i.e., the feature is 'incomplete'

Hard-to-test features

Features that don't fit well with the product

Features that are not optimized enough for fast-typists or target users

Admin tP Grading → Possible UG Bugs

These are considered UG bugs (if they hinder the reader):

Use of visuals

- Not enough visuals e.g., screenshots/diagrams

- The visuals are not well integrated to the explanation

- The visuals are unnecessarily repetitive e.g., same visual repeated with minor changes

Use of examples:

- Not enough or too many examples e.g., sample inputs/outputs

Explanations:

- The explanation is too brief or unnecessarily long.

- The information is hard to understand for the target audience. e.g., using terms the reader might not know

Neatness/correctness:

- looks messy

- not well-formatted

- broken links, other inaccuracies, typos, etc.

- hard to read/understand

- unnecessary repetitions (i.e., hard to see what's similar and what's different)

- You can also post suggestions on how to improve the product.

Be diplomatic when reporting bugs or suggesting improvements. For example, instead of criticising the current behavior, simply suggest alternatives to consider.

- Report functionality bugs:

Admin tP Grading → Functionality Bugs

These are considered functionality bugs:

Behavior differs from the User Guide

A legitimate user behavior is not handled e.g. incorrect commands, extra parameters

Behavior is not specified and differs from normal expectations e.g. error message does not match the error

- Do not post suggestions but if the product is missing a critical functionality that makes the product less useful to the intended user, it can be reported as a bug of type `type.FeatureFlaw`.

Admin tP Grading → Feature Flaws

These are considered feature flaws:

The feature does not solve the stated problem of the intended user i.e., the feature is 'incomplete'

Hard-to-test features

Features that don't fit well with the product

Features that are not optimized enough for fast-typists or target users

- You may also report UG bugs.

Admin tP Grading → Possible UG Bugs

These are considered UG bugs (if they hinder the reader):

Use of visuals

- Not enough visuals e.g., screenshots/diagrams

- The visuals are not well integrated to the explanation

- The visuals are unnecessarily repetitive e.g., same visual repeated with minor changes

Use of examples:

- Not enough or too many examples e.g., sample inputs/outputs

Explanations:

- The explanation is too brief or unnecessarily long.

- The information is hard to understand for the target audience. e.g., using terms the reader might not know

Neatness/correctness:

- looks messy

- not well-formatted

- broken links, other inaccuracies, typos, etc.

- hard to read/understand

- unnecessary repetitions (i.e., hard to see what's similar and what's different)

d) How to report bugs

- Post bugs as you find them (i.e., do not wait to post all bugs at the end) because bug reports created/modified after the allocated time will not count.

- Launch CATcher, and log in to the correct profile:

  - PE Dry Run: `CS2103/T PE Dry run`
  - PE: `CS2103/T PE`

- Post bugs using CATcher.

Issues created for PE-D and PE need to be in a precise format for our grading scripts to work. Incorrectly-formatted responses will have to be discarded. Therefore, you are not allowed to use the GitHub interface for PE-D and PE activities, unless you have obtained our permission first.

- Post bug reports in the following repo you created earlier:

  - PE Dry Run: `ped`
  - PE: `pe`

- The whole description of the bug should be in the issue description i.e., do not add comments to the issue.

e) Bug report format

- Each bug should be a separate issue.

- Write good quality bug reports; poor quality or incorrect bug reports will not earn credit.

- Use a descriptive title.

- Give a good description of the bug with steps to reproduce, expected, actual, and screenshots. If the receiving team cannot reproduce the bug, you will not be able to get credit for it.

- Assign exactly one `severity.*` label to the bug report. Bug reports without a severity label are considered `severity.Low` (lower severity bugs earn lower credit).

Bug Severity labels:

- `severity.VeryLow`: A flaw that is purely cosmetic and does not affect usage e.g., a typo/spacing/layout/color/font issues in the docs or the UI that doesn't affect usage.
- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.
- `severity.Medium`: A flaw that causes occasional inconvenience to some users but they can continue to use the product.
- `severity.High`: A flaw that affects most users and causes major problems for users. i.e., makes the product almost unusable for most users.

When applying for documentation bugs, replace user with reader.

- Assign exactly one `type.*` label to the issue.

Type labels:

- `type.FunctionalityBug`: A functionality does not work as specified/expected.
- `type.FeatureFlaw`: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage. i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features. These issues are counted against the product design aspect of the project.
- `type.DocumentationBug`: A flaw in the documentation e.g., a missing step, a wrong instruction, typos
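The labelling rules in this section (exactly one `severity.*` label, exactly one `type.*` label, and a missing severity treated as `severity.Low`) can be illustrated with a small self-check. This is a sketch only, not how CATcher or the grading scripts are implemented; the class and method names are hypothetical, and returning the first severity when several are present is an assumption because the text does not cover that case.

```java
import java.util.List;
import java.util.stream.Collectors;

public class BugLabelCheck {
    static final List<String> SEVERITIES =
            List.of("severity.VeryLow", "severity.Low", "severity.Medium", "severity.High");
    static final List<String> TYPES =
            List.of("type.FunctionalityBug", "type.FeatureFlaw", "type.DocumentationBug");

    /** Returns the labels of a report that belong to the given allowed set. */
    static List<String> pick(List<String> labels, List<String> allowed) {
        return labels.stream().filter(allowed::contains).collect(Collectors.toList());
    }

    /** True if the report carries exactly one severity.* and exactly one type.* label. */
    static boolean isWellLabelled(List<String> labels) {
        return pick(labels, SEVERITIES).size() == 1 && pick(labels, TYPES).size() == 1;
    }

    /**
     * Severity used for grading: a report without a severity label is treated as severity.Low.
     * Assumption: if several severity labels are present, the first one is returned.
     */
    static String effectiveSeverity(List<String> labels) {
        List<String> found = pick(labels, SEVERITIES);
        return found.isEmpty() ? "severity.Low" : found.get(0);
    }

    public static void main(String[] args) {
        List<String> complete = List.of("severity.High", "type.FeatureFlaw");
        List<String> missingSeverity = List.of("type.DocumentationBug");
        System.out.println(isWellLabelled(complete));           // true
        System.out.println(isWellLabelled(missingSeverity));    // false
        System.out.println(effectiveSeverity(missingSeverity)); // severity.Low
    }
}
```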

PE Phase 1 - Part II Evaluating Documents [30 minutes]

- This slot is for reporting documentation bugs only. You may report bugs related to the UG and the DG.

- For each bug reported, cite evidence and justify. For example, if you think the explanation of a feature is too brief, explain what information is missing and why the omission hinders the reader.

Admin tP Grading → Possible UG Bugs

These are considered UG bugs (if they hinder the reader):

Use of visuals

- Not enough visuals e.g., screenshots/diagrams

- The visuals are not well integrated to the explanation

- The visuals are unnecessarily repetitive e.g., same visual repeated with minor changes

Use of examples:

- Not enough or too many examples e.g., sample inputs/outputs

Explanations:

- The explanation is too brief or unnecessarily long.

- The information is hard to understand for the target audience. e.g., using terms the reader might not know

Neatness/correctness:

- looks messy

- not well-formatted

- broken links, other inaccuracies, typos, etc.

- hard to read/understand

- unnecessary repetitions (i.e., hard to see what's similar and what's different)

Admin tP Grading → Possible DG Bugs

These are considered DG bugs (if they hinder the reader):

Those given as possible UG bugs ...


Architecture:

- Symbols used are not intuitive

- Indiscriminate use of double-headed arrows

- Architecture-level diagrams (e.g., the sequence diagram showing interactions between main components) contain lower-level details

- Descriptions given are not sufficiently high-level

UML diagrams:

- Notation incorrect or not compliant with the notation covered in the module.

- Some other type of diagram used when a UML diagram would have worked just as well.

- The diagram used is not suitable for the purpose it is used.

- The diagram is too complicated.

Code snippets:

- Excessive use of code e.g., a large chunk of code is cited when a smaller extract would have sufficed.

Problems in User Stories. Examples:

- Incorrect format

- All three parts are not present

- The three parts do not match with each other

- Important user stories missing

Problems in Use Cases. Examples:

- Formatting/notational errors

- Incorrect step numbering

- Unnecessary UI details mentioned

- Missing/unnecessary steps

- Missing extensions

Problems in NFRs. Examples:

- Not really a Non-Functional Requirement

- Not scoped clearly (i.e., hard to decide when it has been met)

- Not reasonably achievable

- Highly relevant NFRs missing

Problems in Glossary. Examples:

- Unnecessary terms included

- Important terms missing

PE Phase 1 - Part III Overall Evaluation [15 minutes]

- To be submitted via TEAMMATES. You are recommended to complete this during the PE session itself, but you have until the end of the day to submit (or revise) your submissions.

Important questions included in the evaluation:

Evaluate based on the User Guide and the actual product behavior.

| Criterion | Unable to judge | Low | Medium | High |
|---|---|---|---|---|
| target user | Not specified | Clearly specified and narrowed down appropriately | | |
| value proposition | Not specified | The value to target user is low. App is not worth using | Some small group of target users might find the app worth using | Most of the target users are likely to find the app worth using |
| optimized for target user | | Not enough focus for CLI users | Mostly CLI-based, but cumbersome to use most of the time | Feels like a fast typist can be more productive with the app, compared to an equivalent GUI app without a CLI |
| feature-fit | | Many of the features don't fit with others | Most features fit together but a few may be possible misfits | All features fit together to form a cohesive whole |

Evaluate based on fit-for-purpose, from the perspective of a target user.

For reference, the AB3 UG is here.

Evaluate based on fit-for-purpose from the perspective of a new team member trying to understand the product's internal design by reading the DG.

For reference, the AB3 DG is here.

[0..20] e.g., if you give 8, that means the team's effort is about 80% of that spent on creating AB3. We expect most typical teams to score close to 10.

- Do read the DG appendix named `Effort`, if any.

- Consider implementation work only (i.e., exclude testing, documentation, project management etc.)

- Do not give a high value just to be nice. Your responses will be used to evaluate your effort estimation skills.
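The effort scale above maps a rating to a percentage of the AB3 effort (8 means about 80%). A minimal sketch of that arithmetic follows; the class and method names are hypothetical, and treating the scale as linear across the whole 0..20 range is an assumption extrapolated from the single example given.

```java
public class EffortScale {
    /**
     * Converts an effort rating on the 0..20 scale to a percentage of the
     * effort spent on creating AB3 (assumed linear: 10 means the same effort as AB3).
     */
    static int percentOfAb3(int rating) {
        if (rating < 0 || rating > 20) {
            throw new IllegalArgumentException("rating must be in 0..20: " + rating);
        }
        return rating * 10;
    }

    public static void main(String[] args) {
        System.out.println(percentOfAb3(8));  // 80
        System.out.println(percentOfAb3(10)); // 100
    }
}
```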

PE Phase 2: Developer Response

Deadline: Tue, Nov 17th 2359

This phase is for you to respond to the bug reports you received.

Duration: The review period will start around 1 day after the PE (exact time to be announced) and will last for 2-3 days. However, you are recommended to finish this task ASAP, to minimize cutting into your exam preparation work.

Bug reviewing is recommended to be done as a team as some of the decisions need team consensus.

Instructions for Reviewing Bug Reports

- Don't freak out if there are a lot of bug reports. Many can be duplicates and some can be false positives. In any case, we anticipate that all of these products will have some bugs and our penalty for bugs is not harsh. Furthermore, it depends on the severity of the bug. Some bugs may not even be penalized.

- CATcher does not come with a UG, but the UI is fairly intuitive (there are tool tips too). Do post in the forum if you need any guidance with its usage.

- Also note that CATcher hasn't been battle-tested for this phase, in particular, w.r.t. multiple team members editing the same issue concurrently. It is ideal if the team members get together and work through the issues together. If you think others might be editing the same issues at the same time, use the `Sync` button at the top to force-sync your view with the latest data from GitHub.

Log in to CATcher with the profile `CS2103/T PE`. It will show all the bugs assigned to your team, divided into three sections:

- `Issues Pending Responses`: Issues that your team has not processed yet.
- `Issues Responded`: Your job is to get all issues to this category.
- `Faulty Issues`: e.g., bugs marked as duplicates of each other, or causing circular duplicate relationships. Fix the problem given so that no issues remain in this category.

You must use CATcher. You are strictly prohibited from editing PE bug reports using the GitHub Web interface as it can render bug reports unprocessable by CATcher, sometimes in irreversible ways, and can affect the entire class. Please contact the prof if you are unable to use CATcher for some reason.

- If a bug seems to be for a different product (i.e. wrongly assigned to your team), let us know ASAP.

- If the bug is reported multiple times,

  - Mark all copies EXCEPT one as duplicates of the one left out (let's call that one the original) using the `A Duplicate of` tick box.
  - For each group of duplicates, all duplicates should point to one original i.e., no multiple levels of duplicates, and no cyclical duplication relationships.
  - If the duplication status is eventually accepted, all duplicates will be assumed to have inherited the `type.*` and `severity.*` from the original.

- Apply one of these labels (if missing, we assign: `response.Accepted`)

Response Labels:

- `response.Accepted`: You accept it as a bug.
- `response.NotInScope`: It is a valid issue but not something the team should be penalized for e.g., it was not related to features delivered in v1.4.
- `response.Rejected`: What tester treated as a bug is in fact the expected behavior, or the tester was mistaken in some other way.
- `response.CannotReproduce`: You are unable to reproduce the behavior reported in the bug after multiple tries.
- `response.IssueUnclear`: The issue description is not clear. Don't post comments asking the tester to give more info. The tester will not be able to see those comments because the bug reports are anonymous.

- Apply one of these labels (if missing, we assign: `type.FunctionalityBug`)

Type labels:

- `type.FunctionalityBug`: A functionality does not work as specified/expected.
- `type.FeatureFlaw`: Some functionality missing from a feature delivered in v1.4 in a way that the feature becomes less useful to the intended target user for normal usage. i.e., the feature is not 'complete'. In other words, an acceptance-testing bug that falls within the scope of v1.4 features. These issues are counted against the product design aspect of the project.
- `type.DocumentationBug`: A flaw in the documentation e.g., a missing step, a wrong instruction, typos

- If you disagree with the original severity assigned to the bug, you may change it to the correct level.

Bug Severity labels:

- `severity.VeryLow`: A flaw that is purely cosmetic and does not affect usage e.g., a typo/spacing/layout/color/font issues in the docs or the UI that doesn't affect usage.
- `severity.Low`: A flaw that is unlikely to affect normal operations of the product. Appears only in very rare situations and causes a minor inconvenience only.
- `severity.Medium`: A flaw that causes occasional inconvenience to some users but they can continue to use the product.
- `severity.High`: A flaw that affects most users and causes major problems for users. i.e., makes the product almost unusable for most users.

When applying for documentation bugs, replace user with reader.

- If you need the teaching team's inputs when deciding on a bug (e.g., if you are not sure if the UML notation is correct), post in the forum. Remember to quote the issue number shown in CATcher (it appears at the end of the issue title).
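The duplicate-marking rule given earlier in these instructions (every duplicate points directly to one original, with no multiple levels and no cycles) can be checked mechanically. The sketch below is only an illustration of that rule: the class name, the method name, and the use of issue numbers as map keys are all hypothetical, and it does not reflect how CATcher detects faulty issues.

```java
import java.util.Map;

public class DuplicateCheck {
    /**
     * duplicateOf maps an issue number to the issue it is marked as a duplicate of.
     * The marking is valid if every target is an original, i.e., the target is not
     * itself marked as a duplicate. This rules out chains, cycles and self-references.
     */
    static boolean isValid(Map<Integer, Integer> duplicateOf) {
        for (int original : duplicateOf.values()) {
            if (duplicateOf.containsKey(original)) {
                return false; // the "original" is itself a duplicate of something
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(isValid(Map.of(2, 1, 3, 1))); // true: #2 and #3 both point to #1
        System.out.println(isValid(Map.of(1, 2, 2, 3))); // false: two levels of duplicates
        System.out.println(isValid(Map.of(1, 2, 2, 1))); // false: cyclical relationship
    }
}
```

A single pass is enough because any chain or cycle must contain at least one duplicate whose target is also a duplicate.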

Some additional guidelines for bug triaging:

- Broken links in UG/DG: Severity can be low or medium depending on how many such cases and how much inconvenience they cause to the reader.

- UML notation variations caused by the diagramming tool: Can be rejected if not contradicting the standard notation (as given by the textbook) i.e., extra decorations that are not misleading.
  If the same problem is reported for multiple diagrams, can be flagged as duplicates.
  Omitting optional notations is not a bug as long as it doesn't hinder understanding.

- Minor typos and grammar errors: These are still considered as `severity.VeryLow` `type.DocumentationBug` bugs (even if it is in the actual UI) which carry a very tiny penalty.

- How to prove that something is 'not in scope': In general, features not-yet-implemented (and hence, not in scope) are the features that have a lower priority than the ones that are implemented already. In addition, the following (at least one) can be used to prove that a feature was left out deliberately because it was not in scope:

- The UG specifies it as not supported or coming in a future version.

- The user cannot attempt to use the missing feature or when the user does so, the software fails gracefully, possibly with a suitable error message i.e., the software should not crash.

- If a missing feature is essential for the app to be reasonably useful, its omission can be considered a feature flaw even if it can be proven as not in scope as given in the previous point.

- If a bug report contains multiple bugs (i.e., despite instructions to the contrary, a tester included multiple bugs in a single bug report), you have to choose one bug and ignore the others. If there are valid bugs, choose from valid bugs. Among the choices available, choose the one with the highest severity (in your opinion). In your response, mention which bug you chose.

- How to decide the severity of bugs related to missing requirements (e.g., missing user stories)? Depends on the potential damage the omission can cause. Keep in mind that not documenting a requirement increases the risk of it not getting implemented in a timely manner (i.e., future developers will not know that feature needs to be implemented).

- You can reject bugs that you inherited from AB3 (i.e., the current behavior is the same as AB3 and you had no reason to change it because the feature applies similarly to your new product).

- Decide who should fix the bug. Use the `Assignees` field to assign the issue to that person(s). There is no need to actually fix the bug though. It's simply an indication/acceptance of responsibility. If there is no assignee, we will distribute the penalty for that bug (if any) among all team members.

  - If it is not easy to decide the assignee(s), we recommend (but not enforce) that the feature owner should be assigned bugs related to the feature. Reason: The feature owner should have defended the feature against bugs using automated tests and defensive coding techniques.

- As far as possible, choose the correct `type.*`, `severity.*`, `response.*`, assignees, and duplicate status even for bugs you are not accepting or marking as duplicates. Reason: your non-acceptance or duplication status may be rejected in a later phase, in which case we need to grade it as an accepted/non-duplicate bug.

- Justify your response. For all of the following cases, you must add a comment justifying your stance. Testers will get to respond to all those cases, and the responses will be double-checked by the teaching team in later phases. Indiscriminate/unreasonable dev/tester responses, if deemed as a case of trying to game the system, will be penalized.

- downgrading severity

- non-acceptance of a bug

- changing the bug type

- non-obvious duplicate

- You can also refer to the below guidelines:

Admin tP Grading → Grading bugs found in the PE

Grading bugs found in the PE

- Of the Developer Testing component (3A, based on the bugs found in your code) and the System/Acceptance Testing component (3B, based on the bugs found in others' code), the one you do better in will be given a 70% weight and the other a 30% weight so that your total score is driven by your strengths rather than weaknesses.

- Bugs rejected by the dev team, if the rejection is approved by the teaching team, will not affect marks of the tester or the developer.

- The penalty/credit for a bug varies based on the severity of the bug: `severity.High` > `severity.Medium` > `severity.Low` > `severity.VeryLow`

- The three types (i.e., `type.FunctionalityBug`, `type.DocumentationBug`, `type.FeatureFlaw`) are counted for three different grade components. The penalty/credit can vary based on the bug type. Given that you are not told which type has a bigger impact on the grade, always choose the most suitable type for a bug rather than try to choose a type that benefits your grade.

- The penalty for a bug is divided equally among assignees.

- Developers are not penalized for duplicate bug reports they received, but the testers earn credit for duplicate bug reports they submitted as long as the duplicates are not submitted by the same tester.

- Obvious bugs (i.e., the same bug reported by many testers) earn less credit for the tester and a slightly higher penalty for the developer.

- If the team you tested has a low bug count i.e., total bugs found by all testers is low, we will fall back on other means (e.g., performance in PE dry run) to calculate your marks for system/acceptance testing.

- Your marks for developer testing depend on the bug density rather than the total bug count. Here's an example:

n bugs found in your feature; it is a big feature consisting of a lot of code → 4/5 marks

n bugs found in your feature; it is a small feature with a small amount of code → 1/5 marks

- You don't need to find all bugs in the product to get full marks. For example, finding half of the bugs of that product or 4 bugs, whichever is lower, could earn you full marks.

- Excessive incorrect downgrading/rejecting/duplicate-flagging, if deemed an attempt to game the system, will be penalized.
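
The arithmetic in the grading rules above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the actual grading script: the function names, the numeric score scale, and the penalty values are hypothetical, and the "half of the bugs or 4 bugs" threshold is taken from the example above, which is itself given only as an example.

```python
def combined_testing_score(dev_testing: float, system_testing: float) -> float:
    """Weight the better of the two component scores at 70% and the other at 30%.

    Both scores are assumed (hypothetically) to be on the same numeric scale.
    """
    better = max(dev_testing, system_testing)
    worse = min(dev_testing, system_testing)
    return 0.7 * better + 0.3 * worse


def split_penalty(penalty: float, assignees: list[str]) -> dict[str, float]:
    """Divide one bug's penalty equally among its assignees.

    Bugs with no assignee are distributed among all team members instead;
    that case is not modelled here.
    """
    if not assignees:
        raise ValueError("this sketch only covers bugs with at least one assignee")
    share = penalty / len(assignees)
    return {name: share for name in assignees}


def bugs_for_full_marks(total_bugs_in_product: int) -> float:
    """Example threshold: half of the product's bugs or 4 bugs, whichever is lower."""
    return min(total_bugs_in_product / 2, 4)


if __name__ == "__main__":
    print(combined_testing_score(dev_testing=4.0, system_testing=2.0))
    print(split_penalty(1.0, ["alice", "bob"]))  # each assignee carries 0.5
    print(bugs_for_full_marks(6))                # half of 6 is lower than 4
    print(bugs_for_full_marks(20))               # capped at 4
```

Taking the maximum first means the order of the two arguments does not matter, which mirrors the rule that the stronger component always gets the larger weight.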

PE Phase 3: Tester Response

Start: Within 1 day after Phase 2 ends.

While you are waiting for Phase 3 to start, comments will be added to the bug reports in your /pe repo to indicate the response each received from the receiving team. Please do not edit any of those comments or reply to them via the GitHub interface. Doing so can invalidate them, in which case the grading script will assume that you agree with the dev team's response. Instead, wait until the start of Phase 3 is announced, after which you should use CATcher to respond.

Deadline: Fri, Nov 20th 2359

- In this phase you will get to state whether you agree or disagree with the dev team's response to the bugs you reported. If a bug reported has been subjected to any of the below by the receiving dev team, you can record your objections and the reason for the objection.

- not accepted

- severity downgraded

- bug type changed

- As before, consider carefully before you object to a dev team's response. If many of your objections were overruled by the teaching team later, you will lose marks for not being able to evaluate a bug report properly.

- If you disagree with the team's decision but would like to revise your own initial type/severity/response as well, you can state that in your explanation, e.g., you rated the bug severity.High and the team changed it to severity.Low, but now you think it should be severity.Medium.

- You can also refer to the below guidelines:

Some additional guidelines for bug triaging:

- Broken links in UG/DG: Severity can be low or medium depending on how many such cases there are and how much inconvenience they cause to the reader.

- UML notation variations caused by the diagramming tool: Can be rejected if not contradicting the standard notation (as given by the textbook) i.e., extra decorations that are not misleading.

If the same problem is reported for multiple diagrams, the reports can be flagged as duplicates.

Omitting optional notations is not a bug as long as it doesn't hinder understanding.

- Minor typos and grammar errors: These are still considered severity.VeryLow type.DocumentationBug bugs (even if they are in the actual UI), which carry a very tiny penalty.

- How to prove that something is 'not in scope': In general, features not-yet-implemented (and hence, not in scope) are the features that have a lower priority than the ones that are implemented already. In addition, the following (at least one) can be used to prove that a feature was left out deliberately because it was not in scope:

- The UG specifies it as not supported or coming in a future version.

- The user cannot attempt to use the missing feature or when the user does so, the software fails gracefully, possibly with a suitable error message i.e., the software should not crash.

- If a missing feature is essential for the app to be reasonably useful, its omission can be considered a feature flaw even if it can be proven as not in scope as given in the previous point.

- If a bug report contains multiple bugs (i.e., despite instructions to the contrary, a tester included multiple bugs in a single bug report), you have to choose one bug and ignore the others. If there are valid bugs, choose from valid bugs. Among the choices available, choose the one with the highest severity (in your opinion). In your response, mention which bug you chose.

- How to decide the severity of bugs related to missing requirements (e.g., missing user stories)? Depends on the potential damage the omission can cause. Keep in mind that not documenting a requirement increases the risk of it not getting implemented in a timely manner (i.e., future developers will not know that feature needs to be implemented).

- You can reject bugs that you inherited from AB3, i.e., the current behavior is the same as AB3 and you had no reason to change it because the feature applies similarly to your new product.
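
The rule above for bug reports that contain multiple bugs (prefer valid bugs, then pick the highest severity) can be sketched as follows. This is a minimal illustration only: the CandidateBug class, its fields, and the choose_bug function are hypothetical names, the validity flag stands in for your own judgment, and ties are broken by taking the first candidate, which the guidelines do not specify.

```python
from dataclasses import dataclass

# Ordering taken from the grading rules:
# severity.High > severity.Medium > severity.Low > severity.VeryLow
SEVERITY_RANK = {
    "severity.VeryLow": 0,
    "severity.Low": 1,
    "severity.Medium": 2,
    "severity.High": 3,
}


@dataclass
class CandidateBug:
    """One of several bugs a tester packed into a single report (hypothetical model)."""
    description: str
    severity: str   # the severity in your opinion, one of the SEVERITY_RANK keys
    is_valid: bool  # whether you consider it a valid bug


def choose_bug(candidates: list[CandidateBug]) -> CandidateBug:
    """Pick the single bug to respond to; the others are ignored."""
    if not candidates:
        raise ValueError("the report contains no bugs to choose from")
    valid = [bug for bug in candidates if bug.is_valid]
    pool = valid or candidates  # if there are valid bugs, choose from them
    return max(pool, key=lambda bug: SEVERITY_RANK[bug.severity])


if __name__ == "__main__":
    report = [
        CandidateBug("app crashes on empty input", "severity.High", is_valid=False),
        CandidateBug("typo in error message", "severity.VeryLow", is_valid=True),
        CandidateBug("wrong total shown", "severity.Medium", is_valid=True),
    ]
    chosen = choose_bug(report)
    print(f"Responding to: {chosen.description}")  # remember to name the chosen bug in your response
```

In the example, the invalid high-severity item is skipped because valid bugs exist, so the medium-severity valid bug is the one chosen.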

- This phase is optional. If you do not respond to a dev response, we'll assume that you agree with it.

- Procedure:

- When the phase has been announced as open, log in to CATcher as usual (profile: CS2103/T PE). You may use the latest version of CATcher or the Web version of CATcher.

- For each issue listed in the Issues Pending Responses section:

- Go to the details, and read the dev team's response.

- If you disagree with any of the items listed, tick the I disagree tick box, enter your justification for the disagreement, and click Save.

- If you are fine with the team's changes, click Save without any other changes, upon which the issue will move to the Issue Responded section.

- Note that only bugs that require your response will be shown by CATcher. Bugs already accepted as reported by the team will not appear in CATcher as there is nothing for you to do about them.

You must use CATcher. You are strictly prohibited from editing PE bug reports using the GitHub Web interface as it can render bug reports unprocessable by CATcher, sometimes in irreversible ways, and can affect the entire class. Please contact the prof if you are unable to use CATcher for some reason.

PE Phase 4: Tutor Moderation

- In this phase tutors will look through all dev responses you objected to in the previous phase and decide on a final outcome.

- In the unlikely case we need your inputs, the tutor will contact you.